If you want to train machine learning models, R offers thousands of packages to try out. However, most of these are written by random students and academics, so they are not proper standardized. It can looks like this:

So, it’s a nightmare trying out a lot of models or (learning) algorithms to predict a specific outcome of interest. What we really need is a kind of meta-model package that wraps the others for you, and has a standardized interface. There are such packages, mainly people have been using caret for years. However, caret is not part of tidyverse and is quite old, so Max Kuhn decided to make a new version for tidyverse: tidymodels. I have previously used caret and was happy about it, so of course I was excited to try out a new and improved version. First off, there is a good official getting started tutorial, and several other good ones made by others.

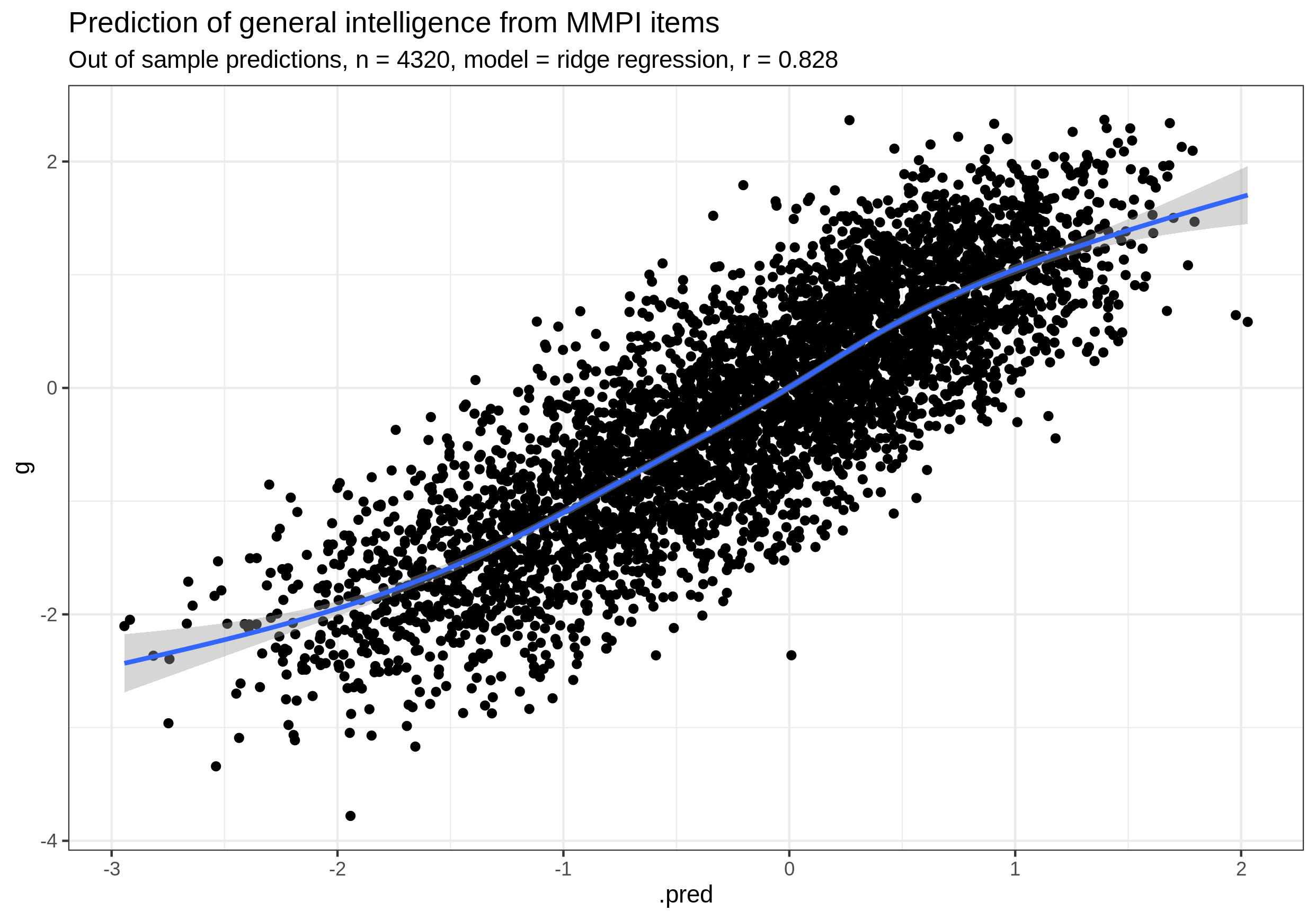

So, I gave it a shot too. Naturally, I made an R notebook and posted it on Rpubs.com (my 129th, it turns out). I did some simple stuff, but one thing I was interested in was whether I could replicate the prior finding that MMPI-based models predict intelligence very well. The prior results were posted in this post, but would it work again with another workflow? Yep! With tidymodels, I get an out-of-sample correlation of .83 between predicted and measured intelligence. That is surprisingly large, a testament to the power of the crud factor when combined with supervised learning.
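For readers new to tidymodels, here is a minimal sketch of how an out-of-sample correlation is obtained: split the data, fit on the training part, predict the held-out part, and correlate predictions with observed values. It uses the built-in `mtcars` data and a plain linear model as stand-ins; the MMPI data and the model from the notebook are not reproduced here.

```r
library(tidymodels)

set.seed(1)

# Stand-in data: predict mpg from all other mtcars columns
split <- initial_split(mtcars, prop = 0.75)
train <- training(split)
test  <- testing(split)

# Fit a linear model on the training set only
fit_lm <- linear_reg() %>%
  set_engine("lm") %>%
  fit(mpg ~ ., data = train)

# Predict the held-out rows and attach the observed outcome
preds <- predict(fit_lm, new_data = test) %>%
  bind_cols(test %>% select(mpg))

# Out-of-sample correlation between predicted and observed values
oos_cor <- cor(preds$.pred, preds$mpg)
oos_cor
```

With such a small data set, the exact value depends heavily on the random split; cross-validation (e.g. `vfold_cv()`) gives a more stable estimate.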

I will note, though, that tidymodels has a learning curve. Some things that took me a while to figure out:

- It is not obvious how to find out what the model function you need is called. I found that one has to go to this search page and try some search queries. In my case, I wanted multinomial logistic regression (e.g. multinom() from nnet). Since I was already familiar with the logistic_reg() function from the tutorial, I assumed it would be an option in there somewhere, but no: it has its own function called multinom_reg().

- To use tune_grid(), one first has to mark the parameters to be tuned in the model function, such as linear_reg(), by setting them to tune() (e.g. linear_reg(penalty = tune())).
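To illustrate the multinom_reg() point, here is a small sketch that uses the built-in `iris` data (three species) as a stand-in outcome. It assumes the nnet package is installed, because the "nnet" engine calls `nnet::multinom()` under the hood. `show_engines("multinom_reg")` lists the other available engines.

```r
library(tidymodels)

# logistic_reg() is meant for two-class outcomes;
# multinomial logistic regression is its own model type
multi_spec <- multinom_reg() %>%
  set_engine("nnet")

# Species has three levels, so a multinomial model is appropriate
multi_fit <- multi_spec %>%
  fit(Species ~ ., data = iris)

# Class probabilities: one .pred_<level> column per species
probs <- predict(multi_fit, new_data = head(iris), type = "prob")
probs
```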
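And for the tune_grid() point, a minimal sketch of the tune() placeholder pattern. A decision tree with the rpart engine is used instead of linear_reg() only because rpart ships with R. The `mtcars` data, the 5-fold split and the 3-level grid are illustrative choices, not settings from the notebook.

```r
library(tidymodels)

set.seed(123)

# tune() marks which arguments tune_grid() should search over
tree_spec <- decision_tree(cost_complexity = tune(), tree_depth = tune()) %>%
  set_engine("rpart") %>%
  set_mode("regression")

# 5-fold cross-validation on stand-in data
folds <- vfold_cv(mtcars, v = 5)

# Regular grid: 3 values for each of the 2 tuned parameters = 9 candidates
tree_grid <- grid_regular(cost_complexity(), tree_depth(), levels = 3)

# Fit every candidate on every fold and collect performance metrics
tree_res <- tune_grid(
  tree_spec,
  mpg ~ .,
  resamples = folds,
  grid = tree_grid
)

# Best candidates by cross-validated RMSE
show_best(tree_res, metric = "rmse", n = 3)
```

The grid's column names must match the arguments marked with tune(); `grid_regular()` takes care of this when it is given the matching dials parameter functions.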