Science is broken

There are numerous problems with science as it is practiced right now. Among them are closed access, publication bias, lack of data sharing, and the horribly bad and slow publication system.

All these problems have been discussed elsewhere before (e.g. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=2051047). Here I want to talk about solutions.

Closed access

This is the easiest one to solve: make papers (and books) openly available. Thousands of journals already do this, but the transition is slow, because the established journals make a lot of money from their middleman position and so naturally resist change. Books are currently not made openly available, at least not legally, although huge pirate sites already offer millions of them. This trend will continue towards all books being openly available, legally or not. A copyright reform would hasten this change.

Publication bias

Positive results are usually more interesting and so both journals and authors tend to focus on writing/publishing them. This leads to a systematic bias in the literature which misleads everyone. The solution to this is to create structural incentives to publish exact (not conceptual) replications of other studies. Whatever barriers there are to this need to be removed.

One idea is to create a distinct type of paper, termed a replication. It must cite the paper it replicates, and a database of replications must be built so that one can actually find them. Right now there is no easy way to sort through all the papers that cite a particular paper to find those that actually tried to replicate it. There needs to be a category for this.
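To make the database idea concrete, here is a minimal sketch of such a lookup table. The `Paper` record, its `kind` and `replicates` fields, the `ReplicationIndex` class and the example DOIs are all hypothetical names I made up for illustration; the only rule taken from the text is that a replication must name the paper it replicates.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class Paper:
    doi: str
    title: str
    kind: str = "original"            # hypothetical labels: "original" or "replication"
    replicates: Optional[str] = None  # DOI of the replicated paper, if any


class ReplicationIndex:
    """Hypothetical lookup table: DOI of a study -> replications that target it."""

    def __init__(self) -> None:
        self._by_target = defaultdict(list)

    def add(self, paper: Paper) -> None:
        if paper.kind != "replication":
            return  # ordinary papers are not indexed here
        if paper.replicates is None:
            raise ValueError("a replication must cite the paper it replicates")
        self._by_target[paper.replicates].append(paper)

    def replications_of(self, doi: str) -> list:
        # .get() avoids creating empty entries for unknown DOIs
        return list(self._by_target.get(doi, []))


index = ReplicationIndex()
index.add(Paper("example/orig-1", "Original study"))
index.add(Paper("example/rep-1", "Exact replication", "replication", "example/orig-1"))
print([p.doi for p in index.replications_of("example/orig-1")])  # ['example/rep-1']
```

Finding every replication of a study then becomes a single lookup instead of reading through all citing papers.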

To create the incentive to replicate prior findings, publication metrics (e.g. the h-index) need to reward authors of replications.
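As an illustration, the sketch below computes the standard h-index (the largest h such that h papers have at least h citations each) and then one possible way of rewarding replications. The `replication_adjusted_h` variant and its `bonus` parameter are purely hypothetical, not an established metric: each replication is simply credited with a few extra citations before h is computed.

```python
def h_index(citations):
    """Largest h such that at least h papers have at least h citations each."""
    h = 0
    for rank, c in enumerate(sorted(citations, reverse=True), start=1):
        if c < rank:
            break
        h = rank
    return h


def replication_adjusted_h(papers, bonus=5):
    """Hypothetical variant: papers are (citations, is_replication) pairs, and
    each replication is credited with `bonus` extra citations before h is computed."""
    return h_index([c + bonus if is_rep else c for c, is_rep in papers])


record = [(10, False), (8, False), (2, True), (1, True)]
print(h_index([c for c, _ in record]))  # 2
print(replication_adjusted_h(record))   # 4
```

Two rarely cited replications leave the plain h-index at 2, while the adjusted version counts them. Whether a flat bonus is the right design is debatable; the point is only that the metric itself can be made to reward replication work.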

Data sharing

Hundreds of thousands of papers now report statistical analyses of data. However, the data themselves and the exact methods are rarely shared. This means that other researchers cannot rerun the analyses or pool the raw data properly into a larger study (a true meta-analysis). Instead they have to rely on pooling the published summary results (standard meta-analysis). Nor is there any way to check for statistical errors the authors might have made, or to detect possible data fraud.
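A small sketch of the difference, with made-up numbers. With the raw data one can pool the observations directly and recompute any reported statistic. With only published summaries one falls back on a standard fixed-effect, inverse-variance meta-analysis, which weights each study by n / variance and assumes a single common true effect. The function names are my own.

```python
from statistics import mean, variance


def pooled_raw_mean(studies):
    """'True' meta-analysis (the post's term): pool the raw observations directly."""
    return mean(x for study in studies for x in study)


def inverse_variance_mean(summaries):
    """Standard fixed-effect meta-analysis from published (mean, variance, n) only.
    Each study is weighted by 1 / SE^2 = n / variance."""
    weights = [n / var for _, var, n in summaries]
    return sum(w * m for w, (m, _, _) in zip(weights, summaries)) / sum(weights)


def reported_mean_matches(raw, reported, tol=1e-9):
    """With raw data, anyone can recompute a reported statistic and check it."""
    return abs(mean(raw) - reported) <= tol


study_a = [1, 2, 3, 4, 5]
study_b = [4, 6, 8]
summaries = [(mean(s), variance(s), len(s)) for s in (study_a, study_b)]

print(pooled_raw_mean([study_a, study_b]))  # 4.125
print(inverse_variance_mean(summaries))     # about 3.818
print(reported_mean_matches(study_b, 6.5))  # False: the reported value is wrong
```

The two estimates differ because they weight the studies differently, and only the raw data make the error check in the last line possible at all.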

Think about it this way: when schoolchildren hand in mathematics assignments, teachers require them to hand in all their calculations and often the data as well. Scientists don’t do this, even though they are grown-ups and the stakes are far higher. It makes no sense at all.

By default, all data should be shared. The only exceptions concern confidential information, e.g. which answers are correct on the items of a test (say, the SAT), or participants’ names. Names can be anonymized easily.
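For example, names can be replaced by sequential IDs before the data are shared, with the name-to-ID key kept privately by the researchers. This is a minimal sketch; the `pseudonymize` function, the `name` column and the `P0001` ID format are my own assumptions. Note that it handles names only: other columns can still identify people and need separate consideration.

```python
def pseudonymize(rows, name_key="name"):
    """Replace each distinct name with a stable ID such as 'P0001'.
    Returns (rows safe to share, private name -> ID key to keep locally)."""
    key = {}
    shared = []
    for row in rows:
        name = row[name_key]
        if name not in key:
            key[name] = f"P{len(key) + 1:04d}"  # assigned in order of first appearance
        clean = {k: v for k, v in row.items() if k != name_key}
        clean["id"] = key[name]
        shared.append(clean)
    return shared, key


rows = [{"name": "Alice", "score": 12}, {"name": "Bob", "score": 9}, {"name": "Alice", "score": 14}]
shared, private_key = pseudonymize(rows)
print(shared)
# [{'score': 12, 'id': 'P0001'}, {'score': 9, 'id': 'P0002'}, {'score': 14, 'id': 'P0001'}]
```

The same person keeps the same ID across rows, so repeated measurements can still be analysed.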

Every science publisher should have a data bank to which researchers can submit their data. Given how cheap data storage is nowadays, it is beyond stupid that this isn’t done.
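A publisher-side data bank need not be complicated. The sketch below is a hypothetical in-memory version (a real one would use proper storage) that links each dataset to the paper’s DOI and records a SHA-256 checksum, so anyone can later verify that a copy of the data is exactly what was deposited. The `DataBank` class and its methods are illustrative names, not an existing service.

```python
import hashlib


class DataBank:
    """Hypothetical publisher-side store that links each dataset to a paper's DOI
    and records a SHA-256 checksum so later copies can be verified."""

    def __init__(self):
        self._deposits = {}

    def deposit(self, doi, data: bytes) -> str:
        digest = hashlib.sha256(data).hexdigest()
        self._deposits[doi] = {"sha256": digest, "size": len(data), "data": data}
        return digest

    def fetch(self, doi) -> bytes:
        return self._deposits[doi]["data"]

    def verify(self, doi, data: bytes) -> bool:
        record = self._deposits.get(doi)
        return record is not None and hashlib.sha256(data).hexdigest() == record["sha256"]


bank = DataBank()
csv = b"id,score\nP0001,12\nP0002,9\n"
bank.deposit("example/orig-1", csv)
print(bank.verify("example/orig-1", csv))                 # True
print(bank.verify("example/orig-1", csv + b"P0003,1\n"))  # False
```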

Peer review

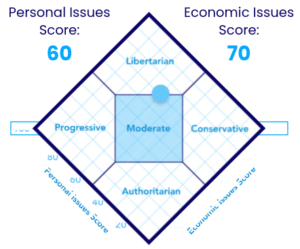

See the infographic here (http://emilkirkegaard.dk/en/?p=4095). There are various ways to move beyond standard PrePPR (pre-publication peer review) towards PostPPR (post-publication peer review). The major problem is the enormous time wasted between first submission and the moment a paper becomes available. First it must get through PrePPR and a number of revisions, and then it must wait for the next issue before other people can see it. Sometimes a paper has to be tried at a few different journals before it finally gets through. This is crazy. Papers should be available from the start; the infographic above shows an alternative system.

The actual peer review is trickier, and there are various ways to do it. One might have a number of editors who decide collectively which category (rejected, revision needed, accepted) a paper belongs in. This is close to the current system and is what the #2 system in the above link depicts.
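How should the editors’ votes be combined? The post does not say; one simple rule, which is my assumption, is to take the median vote, since the three categories are ordered from worst to best. With an even number of editors, this sketch takes the lower median, i.e. it leans towards the stricter decision.

```python
from statistics import median_low

CATEGORIES = ["rejected", "revision needed", "accepted"]  # ordered worst to best


def collective_decision(votes):
    """Hypothetical rule: the (lower) median of the editors' ordered votes."""
    if not votes:
        raise ValueError("need at least one vote")
    ranks = [CATEGORIES.index(v) for v in votes]  # ValueError for unknown labels
    return CATEGORIES[median_low(ranks)]


print(collective_decision(["accepted", "revision needed", "rejected"]))  # revision needed
print(collective_decision(["accepted", "accepted", "revision needed"]))  # accepted
```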

However, a truly decentralized system is possible. Instead of having a small number of editors, one can make the group much larger. The most decentralized system simply lets everybody who wants to rate papers do so, and then aggregates those ratings in various ways. The raters may themselves have published papers, and one can weight their ratings by that factor. Once it reached critical mass, such a system would be entirely self-sustaining.
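One of the “various ways” to aggregate could look like the sketch below. The weighting function 1 + ln(1 + n), where n is the number of papers a rater has published, is a hypothetical choice of mine: every rater counts, published raters count more, and the extra weight grows only logarithmically with output.

```python
import math


def weighted_rating(ratings):
    """Aggregate (score, n_published_papers) pairs into one score.
    Hypothetical weighting: 1 + ln(1 + n) per rater."""
    if not ratings:
        raise ValueError("no ratings to aggregate")
    weights = [1 + math.log1p(n) for _, n in ratings]
    return sum(w * s for w, (s, _) in zip(weights, ratings)) / sum(weights)


print(weighted_rating([(4, 0), (2, 0)]))   # 3.0: with no publications this is the plain mean
print(weighted_rating([(5, 20), (1, 0)]))  # about 4.2: the published rater pulls the score up
```

Other weightings (linear, capped, or based on the raters’ own ratings) would slot into the same function.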

Beyond the rating and categorization of papers described above, the publication system merely needs to assign each submitted paper a DOI and a way to cite it, for example:

Kirkegaard, Emil OW (2014). “Radically open and decentralized science”. OPDESC, published 2014-02-05. doi:12.3456/opdesc.2014.02.0001

The paper must then also be registered in central systems (e.g. Google Scholar).
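Generating the identifier and the citation string is mechanical. This sketch reproduces the example citation above; the suffix pattern (journal, year, month, four-digit sequence number, assumed to count papers within a month) is read off that example. The prefix 12.3456 is the example’s placeholder; real DOI prefixes begin with “10.” and are issued by a registration agency.

```python
from datetime import date

# Placeholder prefix copied from the example citation; real DOI prefixes begin with "10."
DOI_PREFIX = "12.3456"


def make_doi(journal, published, seq, prefix=DOI_PREFIX):
    """Suffix pattern read off the example: <journal>.<year>.<month>.<4-digit sequence>."""
    return f"{prefix}/{journal.lower()}.{published.year}.{published.month:02d}.{seq:04d}"


def format_citation(author, title, journal, published, doi):
    return (f"{author} ({published.year}). \u201c{title}\u201d. {journal}, "
            f"published {published.isoformat()}. doi:{doi}")


day = date(2014, 2, 5)
doi = make_doi("OPDESC", day, 1)
print(format_citation("Kirkegaard, Emil OW", "Radically open and decentralized science", "OPDESC", day, doi))
# Kirkegaard, Emil OW (2014). “Radically open and decentralized science”. OPDESC, published 2014-02-05. doi:12.3456/opdesc.2014.02.0001
```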