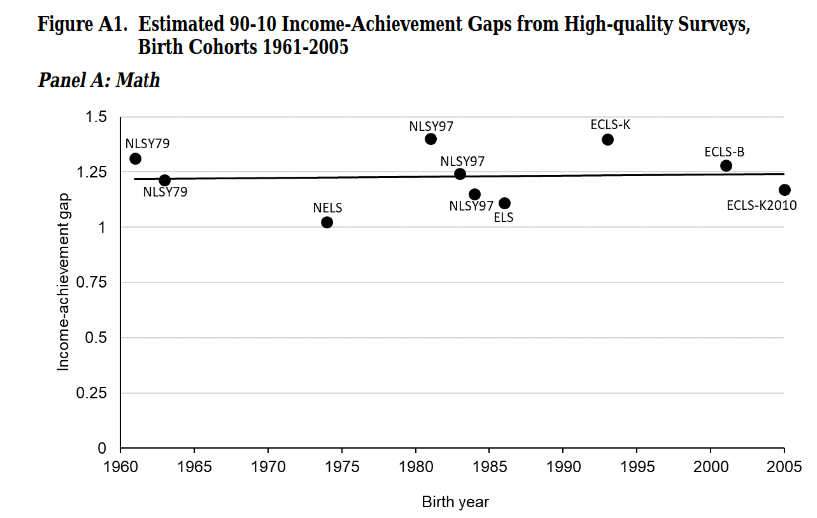

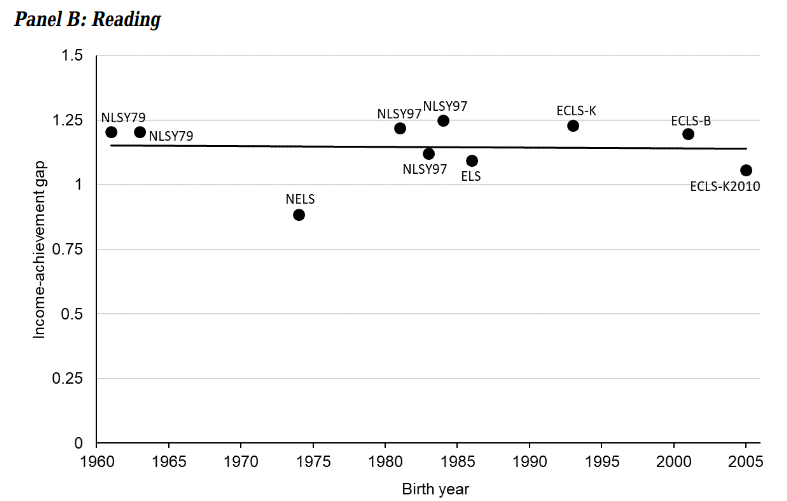

Some people think that human differences can be eliminated by throwing enough money at the problem. Naturally, then, they advocate for more educational spending whenever inconvenient gaps are found, such as those between the children of rich and poor parents:

- Hanushek, E. A., Peterson, P. E., Talpey, L. M., & Woessmann, L. (2020). Long-Run Trends in the US SES-Achievement Gap (No. w26764). National Bureau of Economic Research.

Naturally, the authors of this study never mention the obvious genetic explanation for such stability.

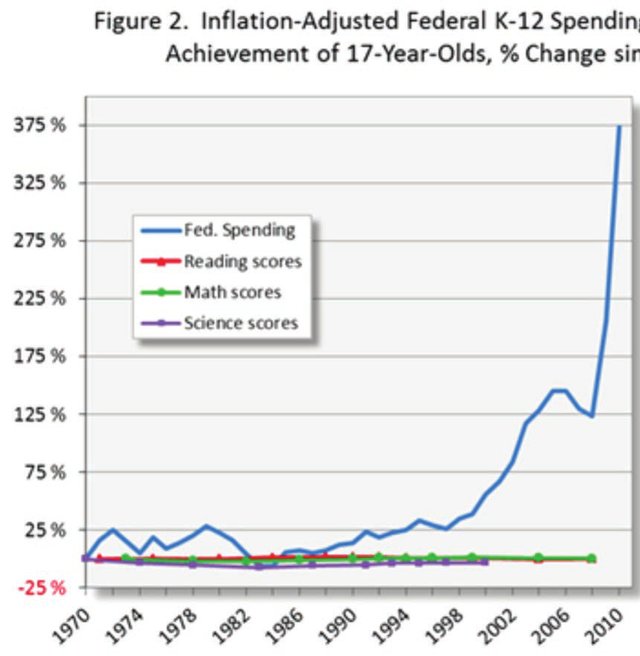

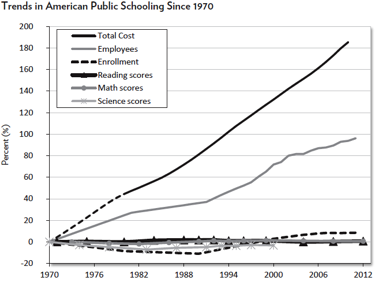

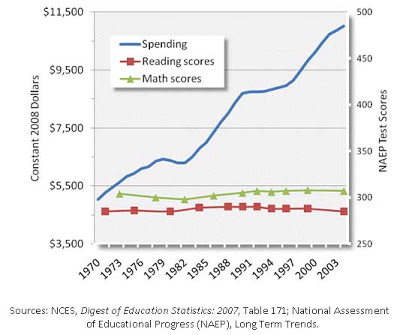

Another way to look at it is to compare changes in educational spending over time with changes in test scores. There are many such figures; three examples:

So, spending (per student, inflation-adjusted) has increased by some multiple since 1970 or whenever, and yet we observe no serious changes in tested performance. The obvious conclusion is that money is not a limiting factor in performance on scholastic tests.

Since sociologists never give up on their core assumption of socially caused inequality, in the new century the things they claim are causal have been books in the home, and then tablets, computers, internet access and whatnot. A casual observer might note that with the advent of the internet and Wikipedia, any interested person has a large fraction of human knowledge at their fingertips for free, and yet we see no serious increases on tests of scientific or general knowledge. Thus, the casual observer will conclude that access to educational resources wasn't a serious limiting factor either.

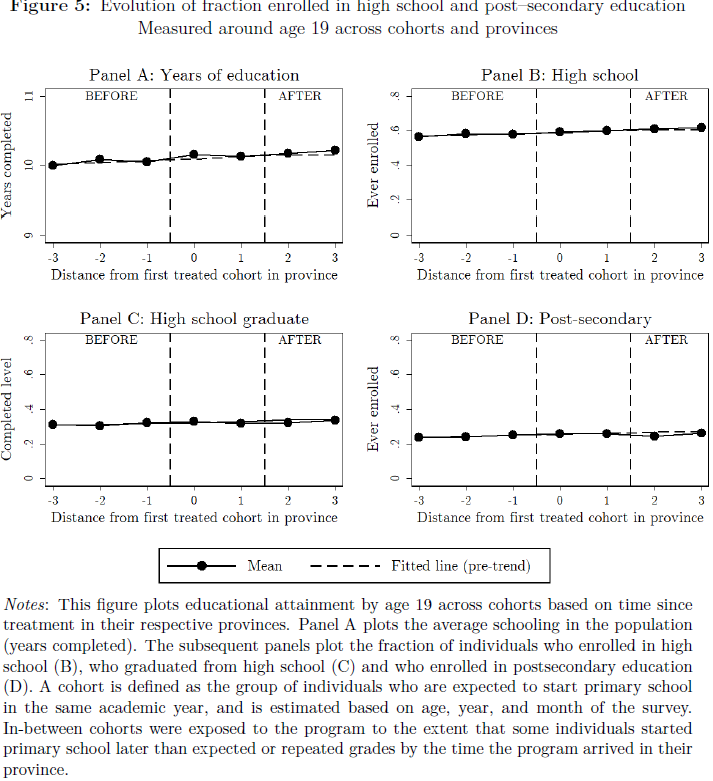

Alright, but what about something a bit more rigorous? OK, every now and then, economists do studies like this one:

- Yanguas, M. L. (2020). Technology and educational choices: Evidence from a one-laptop-per-child program. Economics of Education Review, 76, 101984.

Skeptical scientist John Protzko notes:

> What happens when you give literally every single child in a country a laptop? They use it for gaming, music, and it doesn’t improve educational attainment. Among those who attended college they are more likely to choose health fields over art.

Back in the early 2010s, there were various not-so-serious experiments with tablets in schools in Denmark. Naturally, the media speculated about how these would either improve or worsen things. There were some incompetent evaluations too. Nothing much happened, of course. I doubt anyone will do a serious analysis of these school data, though one could do it with the publicly available GPA averages by school and year.
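For the curious, here is a minimal sketch of how such an analysis could start, using a simple difference-in-differences comparison. Everything in it is an assumption for illustration: the record layout, the `diff_in_diff` and `group_mean` helpers, the rollout year, and the toy numbers, which are made up so the arithmetic is easy to follow and are not real Danish school data. A serious analysis would need more years, more schools, and proper standard errors.

```python
from statistics import mean

# Hypothetical records: (school, year, mean_gpa, adopted_tablets).
# All values are invented for illustration only; they are NOT real data.
records = [
    ("A", 2010, 6.8, True), ("A", 2014, 6.9, True),
    ("B", 2010, 7.1, True), ("B", 2014, 7.0, True),
    ("C", 2010, 6.5, False), ("C", 2014, 6.6, False),
    ("D", 2010, 7.3, False), ("D", 2014, 7.2, False),
]


def group_mean(records, rollout_year, treated, after):
    """Mean GPA for one group (tablet schools or not) in one period."""
    values = [
        gpa
        for _school, year, gpa, adopted in records
        if adopted == treated and (year >= rollout_year) == after
    ]
    return mean(values)


def diff_in_diff(records, rollout_year):
    """Change in tablet schools minus change in comparison schools."""
    treated_change = (
        group_mean(records, rollout_year, True, True)
        - group_mean(records, rollout_year, True, False)
    )
    control_change = (
        group_mean(records, rollout_year, False, True)
        - group_mean(records, rollout_year, False, False)
    )
    return treated_change - control_change


# Assumed rollout year of 2012, purely for the example.
estimate = diff_in_diff(records, rollout_year=2012)
print(f"Difference-in-differences estimate: {estimate:+.2f} GPA points")
```

The point of subtracting the comparison schools' change is to net out whatever happened to grades everywhere in the same period, such as grade inflation or exam changes, so that what remains is the change specific to the tablet schools.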

Stay tuned next week when they announce another big spending rise on education! What will it be this time? Virtual reality for education? I mean, we already tried the online world Second Life, so why not try again with VR? Naturally, you will be paying. Pay your tax with a smile!

For those who want something serious to put in the face of people who claim a new random intervention is plausible, and It Will Definitely Work This Time™, there are large meta-analyses of these. Pretty much nothing works, and the higher the quality of the evaluation, the worse the intervention seems to work.

- Fryer Jr, R. G. (2017). The production of human capital in developed countries: Evidence from 196 randomized field experiments. In Handbook of economic field experiments (Vol. 2, pp. 95-322). North-Holland.
- Lortie-Forgues, H., & Inglis, M. (2019). Rigorous large-scale educational RCTs are often uninformative: Should we be concerned? Educational Researcher, 48(3), 158-166.

Beware that authors of such analyses usually try to spin them in a positive light. You have to read the numbers yourself to be struck by the sheer futility of the effort. Since most people are innumerate, there is no serious hope that It Will Be Better Next Year.