I stumbled upon another calibration test: http://calibratedprobabilityassessment.org/

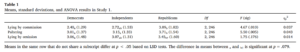

This one is almost entirely based on distances between US cities. I don’t even know where all the states are, so this involved a lot of guessing. But apparently, I’m quite a bit better at this than I thought, as the graph above shows.

The test is not very good, for several reasons:

- It’s almost entirely based on US cities, making it very US-centric. It would be better if it were based on world cities, say, the 100 largest cities in the world. Yes, I’m aware that calibration is largely independent of content knowledge, but it is not entirely independent.

- They don’t provide the data from the test, which could be interesting to look at.

- They don’t provide any numerical output. The graph above is hard to interpret because it lacks, for example, confidence bands. By the looks of it, I was spot on for the 80-90% band, but I think that point was based on only a few items, as I didn’t select that category often.

- They seem to choose city pairs at random, and some of them end up being meaningless. I had one with the same city twice! I also had one with no question at all, just the TRUE and FALSE options.
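To illustrate the kind of numerical output I mean, here is a minimal sketch of how one could compute it if the site exported the raw answers. It groups answers by stated confidence and reports the hit rate with a Wilson score interval for each group. The function names and the input format (pairs of stated confidence and whether the answer was correct) are my own assumptions, and the example answers are made up; the site provides nothing like this.

```python
import math
from collections import defaultdict


def wilson_interval(correct, n, z=1.96):
    """Wilson score interval for a binomial proportion (about 95% for z=1.96)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = correct / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def calibration_table(answers):
    """Summarise (stated_confidence, was_correct) pairs per confidence level.

    Returns rows of (confidence, n_items, hit_rate, ci_low, ci_high),
    sorted by stated confidence.
    """
    bins = defaultdict(lambda: [0, 0])  # confidence -> [n_correct, n_total]
    for conf, ok in answers:
        bins[conf][0] += int(ok)
        bins[conf][1] += 1
    rows = []
    for conf in sorted(bins):
        k, n = bins[conf]
        low, high = wilson_interval(k, n)
        rows.append((conf, n, k / n, low, high))
    return rows


# Hypothetical answers, invented purely for illustration.
example = [(0.6, True), (0.6, True), (0.6, False), (0.9, True), (0.9, True)]
for conf, n, rate, low, high in calibration_table(example):
    print(f"stated {conf:.0%}: n={n}, hit rate {rate:.0%}, CI {low:.0%} to {high:.0%}")
```

With only a handful of items in a category, the interval gets very wide, which is exactly why a single point on the graph is hard to trust. If the whole interval for a category sits above the stated confidence, that would point to underconfidence in that category.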

In general, it appears I’m underconfident.