There are numerous intervention studies aimed at improving cognitive traits. While one can aggregate these in ways that give the appearance of impact, when analyzed properly they don't really show any lasting gains (fade-out effect + publication bias + p-hacking). Every large planned analysis I've looked at found no lasting impact of any sort.
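To see how publication bias alone can make a null literature look effective, here is a minimal Python sketch (not taken from any of the studies discussed). It assumes a hypothetical literature of 2,000 two-arm studies with 30 subjects per arm and a true effect of exactly zero, and it "publishes" only positive results that clear a conventional significance cutoff (t > 1.96, a normal approximation). All parameter values are illustrative assumptions.

```python
import random
import statistics


def simulate_study(n_per_group, true_d, rng):
    """Simulate one two-arm study and return the observed Cohen's d."""
    control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
    treated = [rng.gauss(true_d, 1.0) for _ in range(n_per_group)]
    # Pooled SD for equal group sizes
    pooled_sd = ((statistics.variance(control) + statistics.variance(treated)) / 2) ** 0.5
    return (statistics.mean(treated) - statistics.mean(control)) / pooled_sd


def publication_filter_demo(n_studies=2000, n_per_group=30, true_d=0.0, seed=1):
    """Hypothetical literature: every study is run, only 'significant' positive ones are published."""
    rng = random.Random(seed)
    all_d = [simulate_study(n_per_group, true_d, rng) for _ in range(n_studies)]
    # With equal group sizes, t = d * sqrt(n / 2); keep only positive results with t > 1.96
    published = [d for d in all_d if d * (n_per_group / 2) ** 0.5 > 1.96]
    return all_d, published


all_d, published = publication_filter_demo()
print(f"mean d, all studies:      {statistics.mean(all_d):.3f}")
print(f"mean d, 'published' only: {statistics.mean(published):.3f} (k = {len(published)})")
```

The unfiltered mean hovers near zero, while every "published" estimate exceeds about 0.5 d. The filter alone guarantees this: with 30 per arm, t > 1.96 requires d > 1.96 × √(2/30) ≈ 0.51, so a meta-analysis of only the published studies would report a sizable effect that does not exist.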

There can be several reasons for the lack of lasting gains. In one of my first published papers (2014), we put forward the thesis that the gains were perhaps, in a sense, illusory to begin with. It turns out that when you look at intervention gains on a battery of cognitive tests (from the Head Start preschool program), the gains are larger on the less g-loaded tests. This suggests, but does not prove, that the gains were not in the g factor itself. It does not prove this because if there were true g gains as well as non-g gains, you would get the same negative correlation (Jensen's method is only about relative changes, not absolute ones).

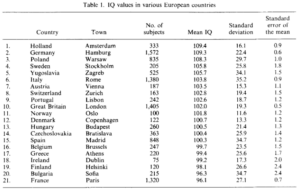
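Jensen's method (the method of correlated vectors) correlates the vector of subtest g-loadings with the vector of subtest gains. The sketch below uses made-up loadings and gains, not the Head Start data, to illustrate the caveat above: adding a genuine g gain on top of non-g gains that are larger on less g-loaded tests can leave the correlation just as negative as before.

```python
def pearson(x, y):
    """Plain Pearson correlation, no dependencies."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


# Hypothetical g-loadings for five subtests (illustrative, not real data)
g_loadings = [0.45, 0.55, 0.65, 0.75, 0.85]

# Scenario A: only non-g gains, larger on the less g-loaded subtests
gains_a = [0.5 * (1 - lam) for lam in g_loadings]

# Scenario B: the same non-g gains plus a real g gain of 0.2 SD,
# which shows up on each subtest in proportion to its g-loading
gains_b = [0.5 * (1 - lam) + 0.2 * lam for lam in g_loadings]

print(round(pearson(g_loadings, gains_a), 3))  # -1.0
print(round(pearson(g_loadings, gains_b), 3))  # -1.0
```

Both scenarios give a correlation of -1 because, in this toy setup, the gains are an exact linear function of the loadings with a negative slope in both cases. The real g gain in scenario B only flattens the slope (from -0.5 to -0.3), and the correlation does not register that.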

When you analyze cognitive batteries, what you usually find is that the tests don't predict future outcomes equally well. In fact, most of the validity comes from the common variance, the g factor. This is what Arthur Jensen meant when he talked about g being the active ingredient in cognitive tests. This can be illustrated with data from the US military's Project A from the 1990s:

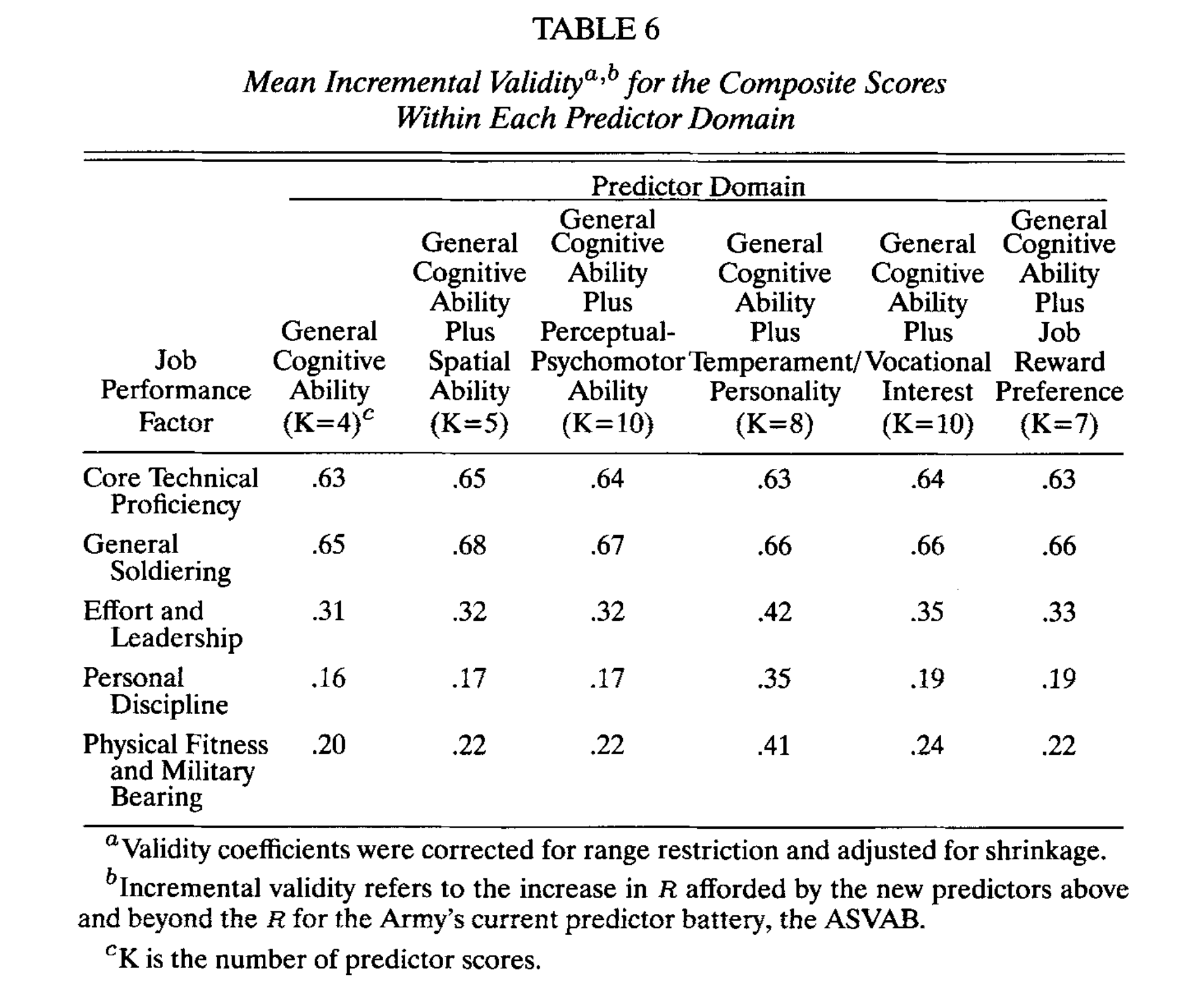

This study tried out a large number of additional cognitive tests grouped into various domains (spatial, perceptual, etc.) and looked at the degree to which various military job performance measures could be predicted in advance (2 years after the cognitive testing). The first column shows g alone (the overall composite score), and the other columns show g + [other ability]. The gains from adding non-g variables are small, bordering on nonexistent. In other words, non-g abilities do not predict military job performance much, even when using tests specifically designed to measure additional abilities that were thought to be relevant but had not been captured before. These results come from a single large study of thousands of military subjects, so one cannot cast doubt on them based on meta-analytic correction methods (which is what the recent debate was about).

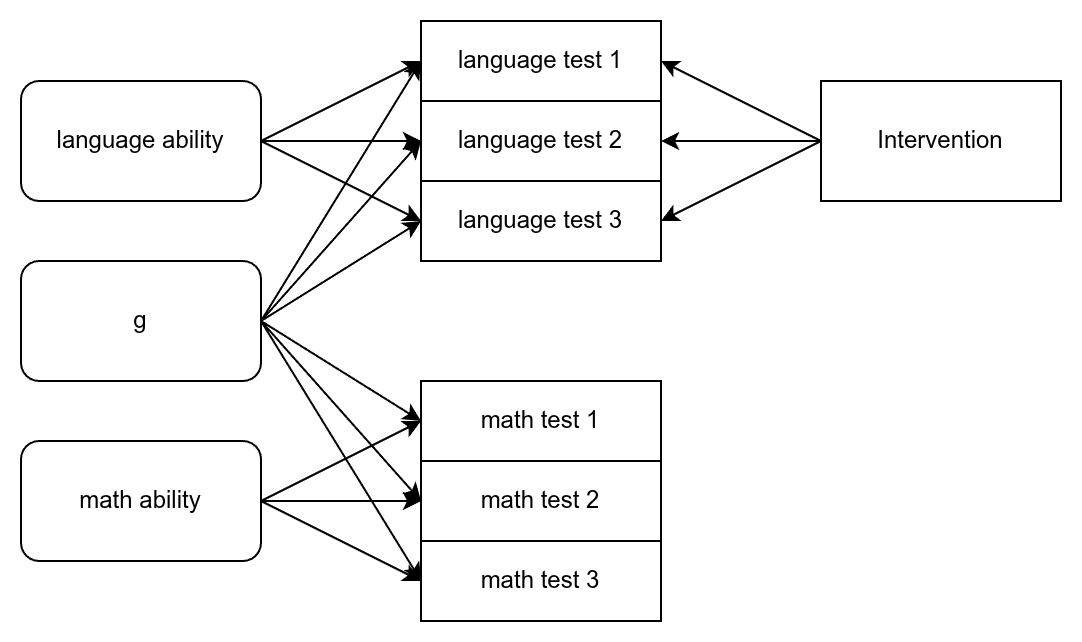
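The Project A comparison is a question of incremental validity: how much R² a specific ability adds over g. A minimal sketch using the standard formula for the squared multiple correlation with two standardized predictors, with hypothetical correlations (not the Project A values), shows why a specific test that correlates with the outcome mainly through g adds almost nothing.

```python
def r2_two_predictors(r_y1, r_y2, r_12):
    """Squared multiple correlation of Y on two standardized predictors."""
    return (r_y1**2 + r_y2**2 - 2 * r_y1 * r_y2 * r_12) / (1 - r_12**2)


r_g_y = 0.50  # hypothetical validity of the g composite for job performance
r_g_s = 0.60  # hypothetical correlation between the g composite and a specific test

for r_s_y in (0.30, 0.32, 0.40):  # hypothetical validities of the specific test
    delta = r2_two_predictors(r_g_y, r_s_y, r_g_s) - r_g_y**2
    print(f"r(specific, Y) = {r_s_y:.2f} -> incremental R^2 = {delta:.4f}")
```

With these made-up numbers, a specific test whose validity of .30 is fully accounted for by its .60 correlation with g (0.50 × 0.60 = 0.30) adds nothing, and even one with a validity of .40 adds only about .016 to R².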

I want to spell out why this is important for interventions to improve cognitive skills. Interventions usually target one or more particular skills or domains. For instance, this recent early intervention study, which saw some discussion on X, aimed at language skills. This is a common target, usually based on some cognitive psychology theory about the importance of [whatever aspect of language ability]. We can draw the idea as a DAG:

In this case, we have 6 tests (3 for math and 3 for language) that are caused by 3 latent abilities. The underlying abilities are orthogonal (uncorrelated) in the bi-factor model, so language ability here means non-g language ability (degree of verbal tilt). The intervention is language training aimed at improving subjects' language skills, say, vocabulary, listening, and reading. It is not expected or designed to improve subjects' math ability, hence there are no causal arrows from it to math. This also means there is no far transfer (training on one type of test improving performance on unrelated tests). Far transfer would suggest an increase in test scores resulting from an increase in g itself, since any g increase would affect every cognitive test in proportion to its g-loading.

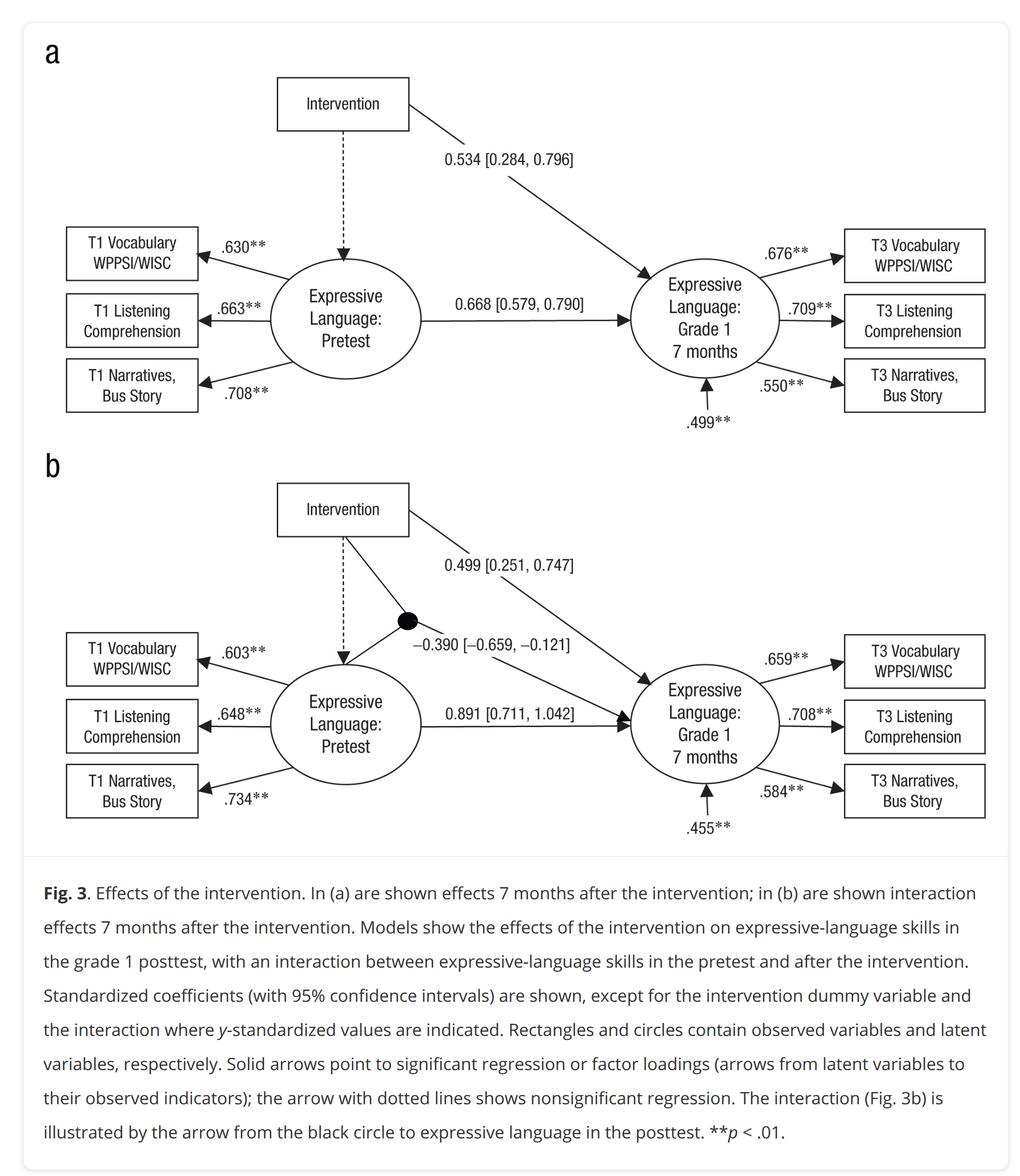
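The DAG can be written as a bi-factor measurement model in which each test score is λ_g·g + λ_s·(group factor) + error. Under that model, shifting the mean of a latent factor changes each test's expected score by the corresponding loading times the shift. The sketch below uses hypothetical test names and loadings (the study's actual tests and loadings are not given here) to contrast a language-only gain with a genuine g gain.

```python
# Hypothetical standardized loadings: (g-loading, group factor, group loading)
TESTS = {
    "vocabulary":    (0.70, "language", 0.40),
    "listening":     (0.65, "language", 0.45),
    "reading":       (0.70, "language", 0.35),
    "arithmetic":    (0.75, "math", 0.30),
    "geometry":      (0.65, "math", 0.40),
    "word_problems": (0.70, "math", 0.35),
}


def expected_gains(delta_g=0.0, delta_group=None):
    """Expected change in each test (SD units) for latent mean shifts in a bi-factor model."""
    delta_group = delta_group or {}
    return {
        name: lam_g * delta_g + lam_s * delta_group.get(group, 0.0)
        for name, (lam_g, group, lam_s) in TESTS.items()
    }


# Language training that raises only the non-g language factor by 0.5 SD
near = expected_gains(delta_group={"language": 0.5})
# A genuine 0.5 SD increase in g (what far transfer would look like)
far = expected_gains(delta_g=0.5)

for name in TESTS:
    print(f"{name:14s} language-only: {near[name]:.3f}   g-only: {far[name]:.3f}")
```

Under the language-only shift the math tests do not move at all, while a g shift raises every test in proportion to its g-loading, which is the pattern one would look for as evidence of far transfer.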

In this kind of scenario, moderate to large initial gains are usually seen in the targeted skills. In the recent study, it looked like this:

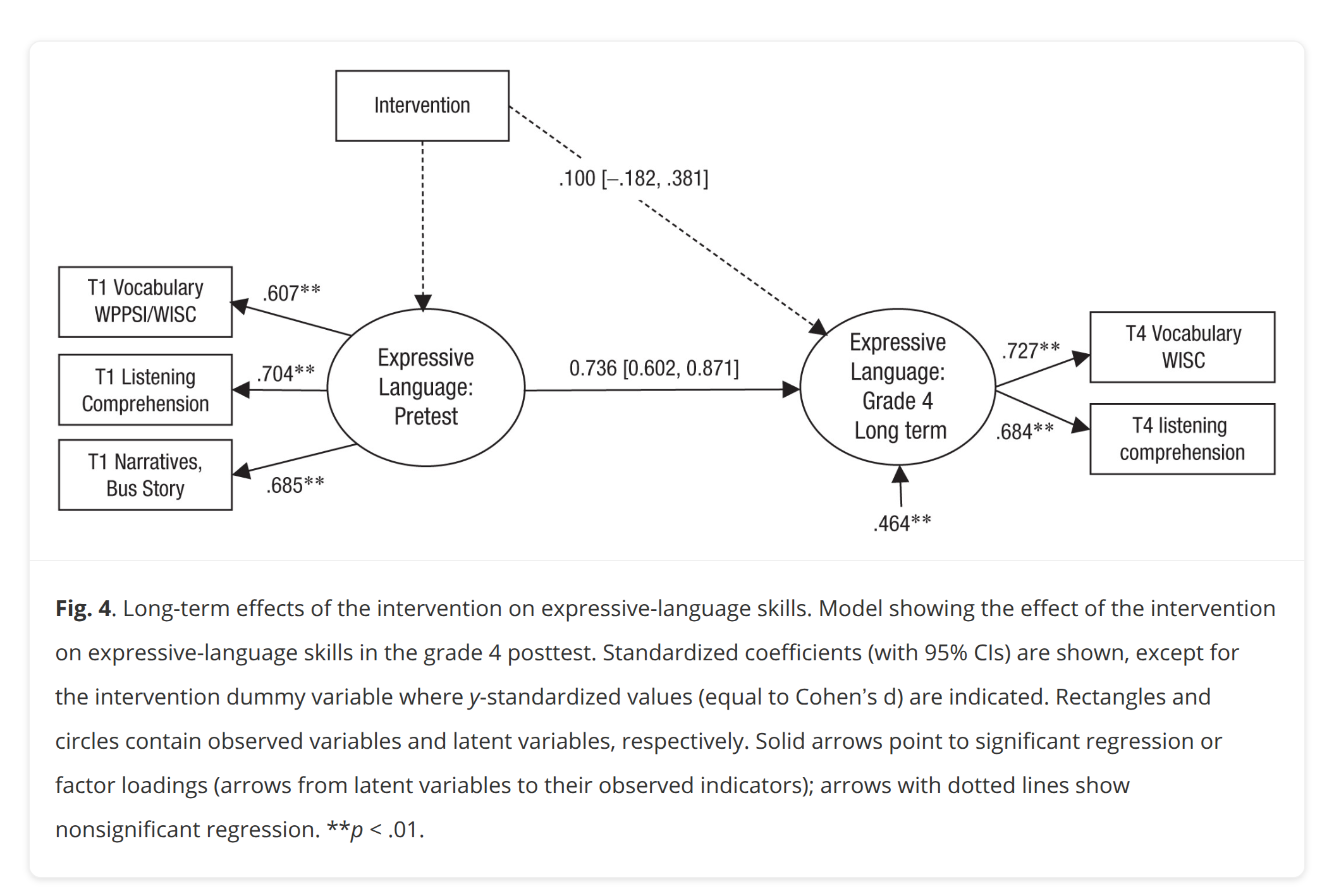

Thus, after 7 months, there was an average gain of about 0.50 d in the latent language ability score. There was also an interaction such that subjects with weaker initial skills gained relatively more (the interaction term is expressed per +1 unit of initial ability). However, after 4 years:

The residual gain is now 0.10 d, which is not distinguishable from chance at this sample size. This was a pre-registered analysis, so it can probably be trusted despite the sample size of only 300.
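As a rough check on the "not beyond chance" reading, the sketch below uses the common large-sample approximation to the standard error of Cohen's d. It assumes about 300 children split evenly into two arms of 150 and ignores the study's actual design (latent-variable scoring, covariates, any clustering), so it is a back-of-envelope calculation rather than a reanalysis.

```python
import math


def d_standard_error(d, n1, n2):
    """Common large-sample approximation to the standard error of Cohen's d."""
    return math.sqrt((n1 + n2) / (n1 * n2) + d**2 / (2 * (n1 + n2)))


def d_confidence_interval(d, n1, n2, z=1.96):
    """Approximate 95% confidence interval for d (normal approximation)."""
    se = d_standard_error(d, n1, n2)
    return d - z * se, d + z * se


# Assumption: ~300 children split evenly into two arms of 150
for label, d in (("7 months", 0.50), ("4 years", 0.10)):
    lo, hi = d_confidence_interval(d, 150, 150)
    print(f"{label}: d = {d:.2f}, 95% CI [{lo:.2f}, {hi:.2f}]")
```

Under these assumptions, the 0.50 d gain at 7 months has an approximate 95% interval of about 0.27 to 0.73, while the 0.10 d at 4 years gives roughly -0.13 to 0.33, which comfortably includes zero.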

Let's ignore for a moment the issue that the gains are transient and assume subjects acquired a permanent gain in their language skills or general language ability (the latent variable at the top). Would this help them later in life? Well, recall that the predictive validity of cognitive tests for future outcomes usually comes mostly from general intelligence (g), not from the other factors. As such, even permanent increases in some kind of broad non-g ability are not going to be very helpful. The focus on observed test scores mixes up these considerations. Even though an overall composite score is mostly due to g variation (at a given time), changes in such a composite score can be mostly due to non-g variation (temporal changes often have different causes than simultaneous differences do). Thus, if some intervention results in a permanent increase in some overall composite score, say, because subjects retained some knowledge of history or of how to solve a certain kind of puzzle, this score gain is not generally expected to improve most life outcomes, because it is an improvement in the wrong skill/trait. To put it more simply: it doesn't help to make 2nd graders a bit better at reading for a few years, since the main reason reading ability predicts future life outcomes (jobs etc.) is that it is a good measure of g.
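The point that a composite can be mostly g at a given time while a change in it is entirely non-g can be made concrete with a toy bi-factor model. The loadings below (0.7 on g and 0.4 on a group factor for all six tests) are hypothetical, chosen only for illustration, and the composite is assumed to be a unit-weighted sum of the six tests.

```python
import math

# Hypothetical bi-factor loadings for six standardized tests:
# every test loads 0.7 on g; tests 1-3 load 0.4 on language, tests 4-6 load 0.4 on math.
G_LOADING, GROUP_LOADING, N_PER_GROUP = 0.7, 0.4, 3
N_TESTS = 2 * N_PER_GROUP
UNIQUE_VAR = 1 - G_LOADING**2 - GROUP_LOADING**2  # residual variance of each test

# Variance of the unit-weighted sum of the six tests, split by source
var_g = (N_TESTS * G_LOADING) ** 2
var_groups = 2 * (N_PER_GROUP * GROUP_LOADING) ** 2  # language + math factors
var_unique = N_TESTS * UNIQUE_VAR
var_total = var_g + var_groups + var_unique

g_share = var_g / var_total

# A permanent +1 SD shift in the language group factor, with g unchanged,
# expressed in SD units of the composite
composite_gain_sd = N_PER_GROUP * GROUP_LOADING / math.sqrt(var_total)

print(f"share of composite variance due to g: {g_share:.2f}")
print(f"composite gain from language factor alone: {composite_gain_sd:.2f} SD")
```

With these numbers about 78% of the composite's variance comes from g, yet a +1 SD shift in the language factor alone raises the composite by about 0.25 SD with no change in g. If, as a simplifying assumption, outcomes depend only on g, the predicted change in outcomes from that score gain is zero.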

I think the main reason the gains fade out is that the group factors (non-g broad abilities) represent specific knowledge of, or training on, a certain kind of task. If you teach a 5-year-old how to do 2nd grade math problems, this will boost the child's math test scores for the next few years. However, once their fellow students also acquire the ability to solve 2nd grade math problems, they are on the same footing again, and the gains fade out. More precisely, the other students catch up in the specific knowledge domain you added some knowledge to. The gains weren't in some more general ability to do math (independently of g) but rather in how to solve particular problems similar to the ones you taught them. A lot of research finds that people do not generalize much from principles to less closely related problems. This is why skill transfer doesn't work very well in general. Students can generally be taught specific skills they can apply to a class of problems, but they generally won't figure out how to apply the same skills to unfamiliar problems. No far transfer. For those interested in far transfer results, here are some key meta-analyses and reviews (Giovanni Sala has done excellent work on this topic):

- Sala, G., Aksayli, N. D., Tatlidil, K. S., Tatsumi, T., Gondo, Y., & Gobet, F. (2019). Near and far transfer in cognitive training: A second-order meta-analysis. Collabra: Psychology, 5(1), 18.

  - "Regarding practical implications, the obvious conclusion is that, to date, professional and educational curricula should focus on domain-specific knowledge rather than general and allegedly transferable skills."

- Sala, G., & Gobet, F. (2017). Does far transfer exist? Negative evidence from chess, music, and working memory training. Current Directions in Psychological Science, 26(6), 515-520.

  - "For this reason, researchers and policymakers should seriously consider stopping spending resources for this type of research. Rather than searching for a way to improve overall domain-general cognitive ability, the field should focus on clarifying the domain-specific cognitive correlates underpinning expert performance."

- Haier, R. J. (2014). Increased intelligence is a myth (so far). Frontiers in Systems Neuroscience, 8, 34.

  - "In the future, there may be strong empirical rationales for spending large sums of money on cognitive training or other interventions aimed at improving specific mental abilities or school achievement (in addition to the compelling moral arguments to do so), but increasing general intelligence is quite difficult to demonstrate with current tests."