This paper came out a while ago, but I neglected to comment on it.

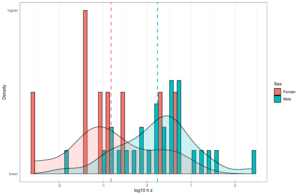

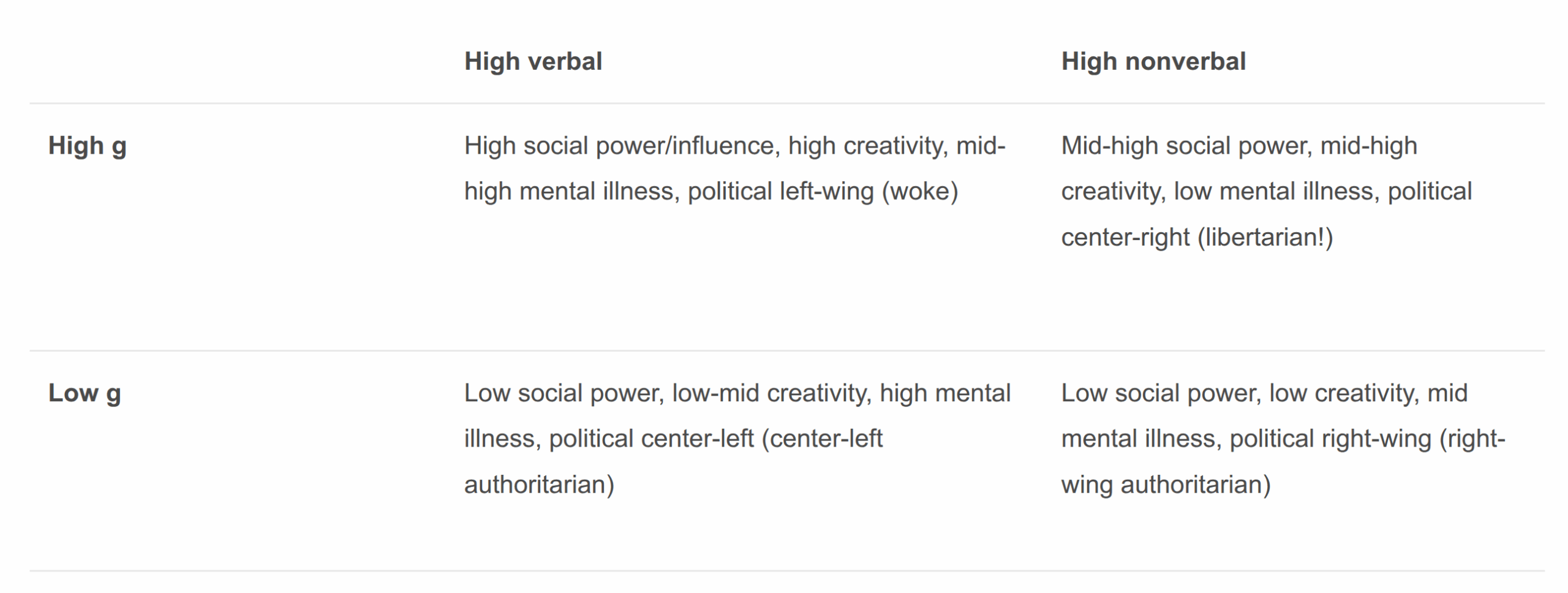

You may recall that 5 years ago I put forward the verbal tilt model of certain behavioral patterns across various social groups:

Later, in 2022, Roon and others renamed this pattern to wordcel (a play on incel), which then got very popular. Popular enough that the usual verbal tilt journalists started writing ‘explainer’ pieces on it. Of course, other researchers had been writing about this before me as well; Ludeke et al. stand out (2017, 2018).

The Minnesota crew decided to test the verbal tilt model on political behaviors and various sociopolitical views in their dataset:

- Edwards, T., Dawes, C. T., Willoughby, E. A., McGue, M., & Lee, J. J. (2025). More than g: Verbal and performance IQ as predictors of socio-political attitudes. Intelligence, 108, 101876.

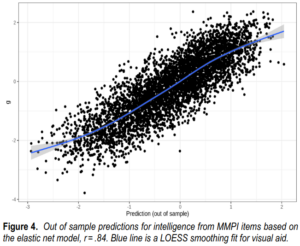

Measures of intelligence predict socio-political attitudes and behaviors, such as liberalism, religiosity, and voter turnout. Little, however, is known about which cognitive abilities are responsible for these relationships. Employing several cohorts from the Minnesota Center for Twin and Family Research, we test the predictive performance of different broad abilities. Using multiple regression to compare verbal and performance IQ from Wechsler intelligence tests, we find verbal IQ more strongly predicts voter turnout, civic engagement, traditionalism, and measures of ideology. On average, the correlation between verbal IQ and our socio-political attitudes is twice as large as that of performance IQ. The same pattern appears after controlling for education and after performing the analysis within sibling pairs. This implies that the relationship cannot be entirely mediated through education, nor entirely confounded by upbringing. Positive and negative controls are employed to test the validity of our methodology. Importantly, we find verbal and performance IQ to be equally predictive of the ICAR-16, a distinct measure of general intelligence. The results imply that variation in cognitive abilities, which are orthogonal to general intelligence, influence socio-political attitudes and behaviors. The role of verbal ability in influencing attitudes may help to explain the ideological leanings of specific occupations. Its association with turnout and civic engagement suggests that those with a verbal tilt may have greater influence over politics and society.

Their study had 4 of the Wechsler tests, so that one can calculate fairly reliable tilt scores, or less directly, scores for verbal and non-verbal tests:

For brevity only the Vocabulary, Information, Picture Arrangement, and Block Design subtests were administered. The factor loadings calculated by Gignac (2005) imply that the loading of Vocabulary and Information (“verbal IQ”) on g is .84, whereas that of Picture Arrangement and Block Design (“performance IQ”) on g is .76. Thus, our measures of verbal and performance IQ should be about equally good as indicators of g. Similarly, the Gignac factor loadings imply that the loading of verbal IQ on its group factor of verbal ability is .44, whereas that of performance IQ on its group factor is .31. Note that the observed correlation between verbal and performance IQ in our sample (.47) fell short of the .84 × .76 = .64 implied by the Gignac calculations based on the WAIS-R standardization data. Nevertheless, we continue to be confident that verbal and performance IQ were about equally g loaded in our sample because each was about equally correlated with head circumference within families (Lee et al., 2019).

Their numbers actually show that the verbal tests have the stronger g-loading (.84 vs. .76), so in multiple regression their verbal composite score should tend to suck up validity from the slightly less g-loaded non-verbal composite.
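To get a feel for how large this confound could be, here is a minimal sketch. It assumes the Gignac loadings quoted above hold exactly and that a hypothetical outcome relates to both composites only through g; the 0.30 outcome correlation is an arbitrary illustration, not a value from the paper. It solves for the standardized betas from the implied correlation matrix:

```python
import numpy as np

# Assumed inputs: the g-loadings the paper cites from Gignac (2005).
LOAD_VERBAL = 0.84
LOAD_PERFORMANCE = 0.76


def standardized_betas(load_a, load_b, outcome_g_corr):
    """Standardized betas when both predictors relate to the outcome only via g."""
    r_ab = load_a * load_b  # correlation implied by a single common factor
    predictor_corr = np.array([[1.0, r_ab], [r_ab, 1.0]])
    outcome_corr = np.array([load_a, load_b]) * outcome_g_corr
    return np.linalg.solve(predictor_corr, outcome_corr)


# Hypothetical outcome that correlates 0.30 with g and has no tilt effect at all.
beta_verbal, beta_performance = standardized_betas(
    LOAD_VERBAL, LOAD_PERFORMANCE, 0.30
)
beta_ratio = beta_verbal / beta_performance
print(f"implied r = {LOAD_VERBAL * LOAD_PERFORMANCE:.2f}")
print(f"beta ratio (verbal / performance) = {beta_ratio:.2f}")
```

Under these assumptions the verbal beta comes out roughly 1.6 times the performance beta even though tilt plays no role. The ratio does not depend on the chosen outcome correlation. The loadings in their own sample are not reported, so the real size of any such bias is unknown.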

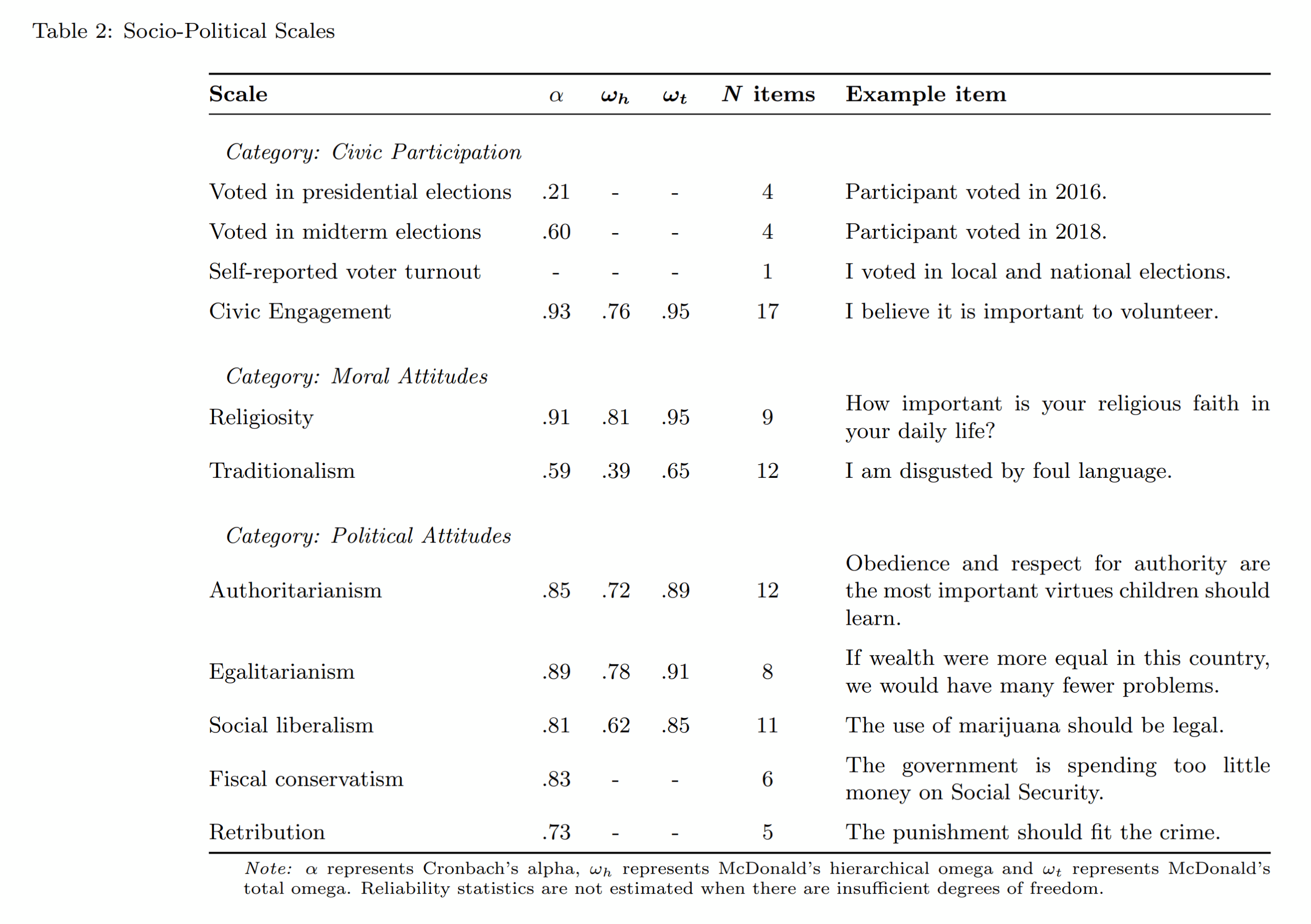

They had in addition a number of surveys about opinions and behaviors:

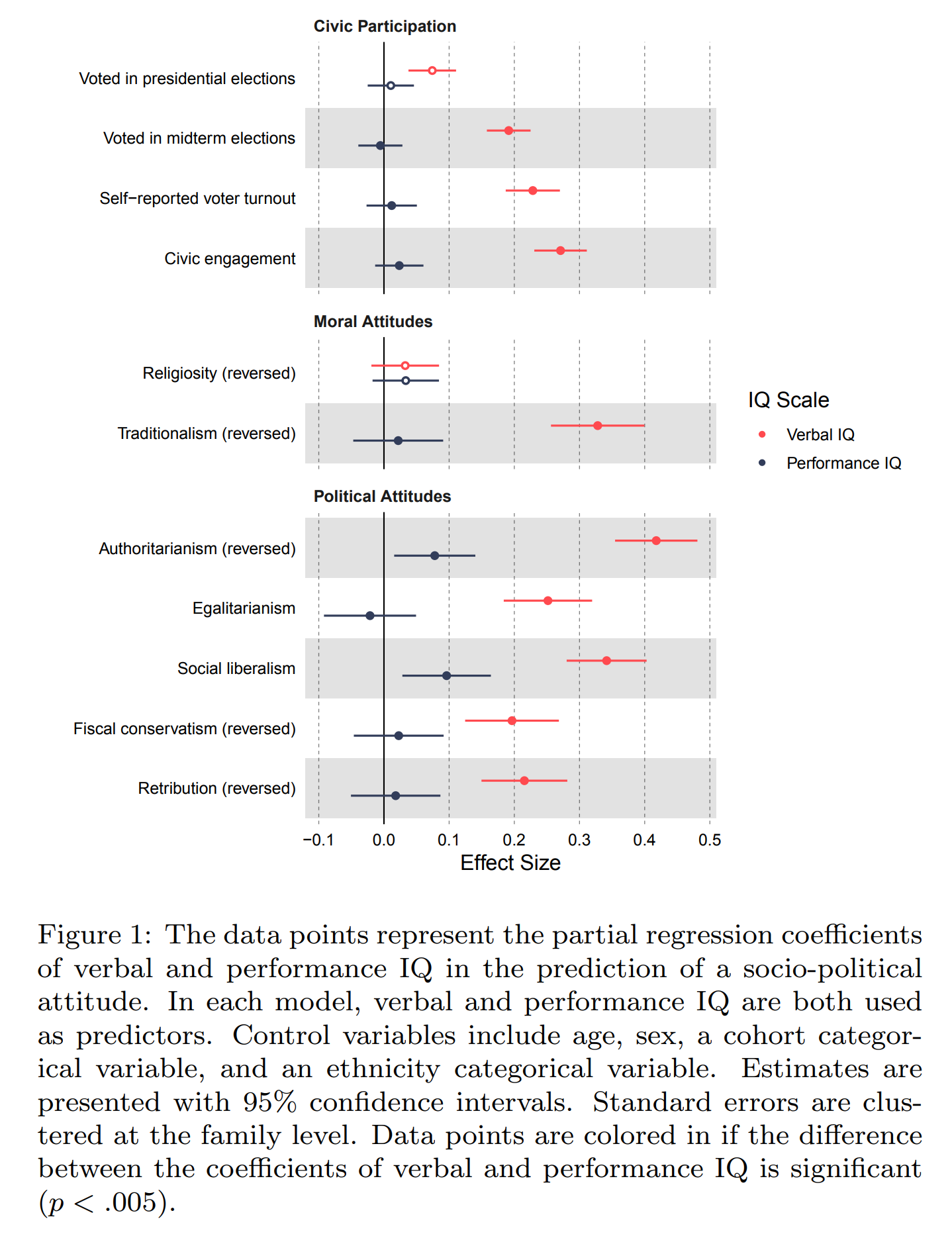

Their results for sociopolitical opinions and behaviors:

Their results basically confirm the verbal tilt model in that verbal performance, controlling for non-verbal performance, predicts opinions and behaviors, while non-verbal tilt in itself doesn’t predict much of anything. Their findings suggest that verbal tilt predicts:

- Political participation, that is, voting, and thinking it is important to vote.

- Non- or anti-traditionalism, but not religiousness. Religiousness tends to go with verbal tilt too, so my guess is that there are opposing effects here: anti-traditionalism causes non-religiousness, but verbal tilt also causes religiousness, and the net effect was near 0 in this sample.

- All manner of left-wing views. Beware the authoritarianism measure here, which is a right-wing-flavored version.

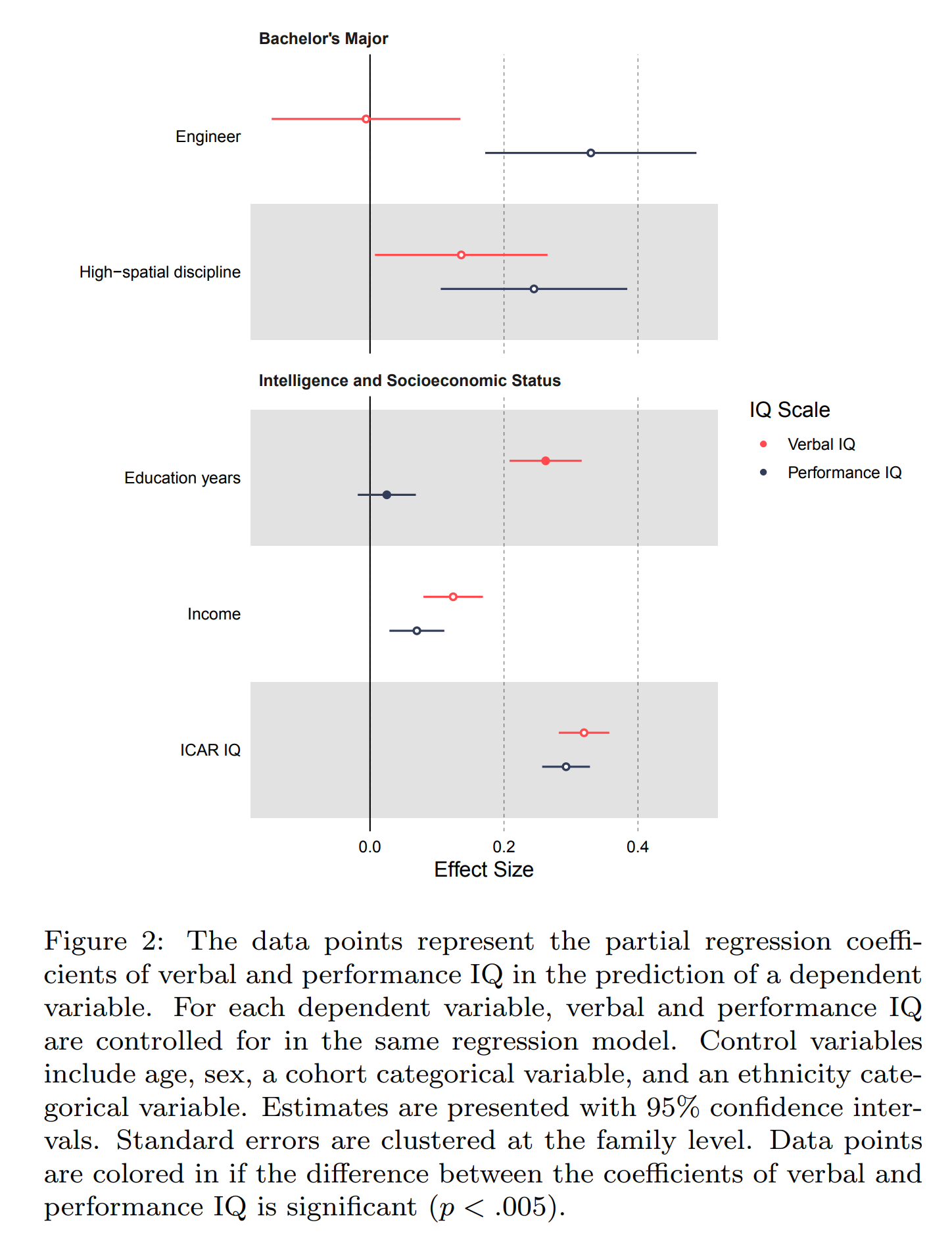

So things look about right, but there’s a nagging doubt about confounding by g-loading. They tested this by looking for outcomes that definitely should correlate more with one composite than the other, or which shouldn’t:

In their positive test for non-verbal tilt, they show that being an engineer is predicted better by non-verbal ability, which is reassuring, but engineers also have very large tilts, so this isn’t a great test. Their more general test of working in a high-spatial job was inconclusive. They also show that ICAR16 scores (ICAR16 is 16 items of 4 types: verbal reasoning, spatial rotation, number series, and matrices) were about equally predicted by verbal and non-verbal scores. However, since ICAR16 is mostly non-verbal, this isn’t as strong evidence as they think it is. In fact, the 4 ICAR16 items on the ‘verbal reasoning’ scale concern math (2 questions are mathematical word problems, not really a word test), not word meanings. Thus, the mostly non-verbal nature of ICAR16 should make it more predictable from non-verbal tests, holding g-loading constant. This evidence is not that compelling.

Why didn’t they just use a better modeling approach than multiple regression? Their answer:

To identify the exact effects of different group factors, it will be necessary to use more measures of intelligence. With many items or subtests, it will be possible to identify multiple factors with confirmatory factor analysis and attempt to estimate their effects on socio-political attitudes in a path model. We find verbal subtests perform similarly to each other and the same for performance subtests. For two political attitudes, Vocabulary has a larger effect size than Information. This hints at the possible relevance of narrower distinctions between cognitive abilities to sociopolitical attitudes.

It would appear they only have the test-level scores for their 4 tests, but not the item data. If they had item data, it would be possible to apply proper bi-factor item response theory to obtain the tilts. Alternatively, they could just regress their g scores out of their 4 tests and use the residuals as predictors along with g. Or just fit PCA on their 4 tests and use dimension 2 as the tilt (which is what the Danish study did).
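The PCA option can be sketched as follows. This is only an illustration under made-up assumptions: the bifactor-style loadings below, and the names `g_load`, `verbal_load`, `performance_load`, and `tilt_scores`, are hypothetical and not taken from the paper. With real data one would start from the observed correlation matrix of the 4 tests instead.

```python
import numpy as np

TESTS = ["Vocabulary", "Information", "Picture Arrangement", "Block Design"]

# Hypothetical loadings, chosen only for illustration (not from the paper).
g_load = np.array([0.8, 0.8, 0.7, 0.7])
verbal_load = np.array([0.4, 0.4, 0.0, 0.0])
performance_load = np.array([0.0, 0.0, 0.3, 0.3])

# Model-implied correlation matrix of the four tests.
corr = (
    np.outer(g_load, g_load)
    + np.outer(verbal_load, verbal_load)
    + np.outer(performance_load, performance_load)
)
np.fill_diagonal(corr, 1.0)

# eigh returns eigenvalues in ascending order, so reorder to get PC1 first.
eigenvalues, eigenvectors = np.linalg.eigh(corr)
order = np.argsort(eigenvalues)[::-1]
eigenvectors = eigenvectors[:, order]

pc1 = eigenvectors[:, 0]
pc2 = eigenvectors[:, 1]
# Eigenvector signs are arbitrary: orient PC1 positive and PC2 so verbal > 0.
pc1 = pc1 * np.sign(pc1.sum())
pc2 = pc2 * np.sign(pc2[0])


def tilt_scores(z_scores):
    """Project standardized test scores (rows = people) onto the tilt component."""
    return np.asarray(z_scores, dtype=float) @ pc2


for name, general, tilt in zip(TESTS, pc1, pc2):
    print(f"{name:20s} PC1 = {general:+.2f}  PC2 = {tilt:+.2f}")
```

With this loading pattern, the first component weights all 4 tests in the same direction (a general score), while the second contrasts the two verbal tests against the two performance tests. Someone with z-scores of `[1, 1, -1, -1]` would get a positive (verbal) tilt score from `tilt_scores`.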

With regard to causality, the findings above are merely cross-sectional and thus could just mean that verbal tilt correlates with something else that causes all of these patterns. I think verbal tilt forms a sort of cluster or profile of various related psychological patterns, as depicted in my summary table at the beginning of the post. Mental health issues, a correlate of verbal tilt, also predict left-wing political views, for instance, but I don’t know if this correlation is strong enough that it might be a large confounder here. It is hard to find a dataset that has all the necessary variables measured with sufficient precision.

So instead they tested whether their findings in general replicate in more stringent sub-analyses. They tried:

- Controlling for years of education: results were fine.

- Family fixed effects (sibling control): results suggested the same pattern but noisier.

- Family fixed effects and years of education: very noisy but same general pattern and the stronger findings replicated.

- Family fixed effects with MZ twins: the p values were now a bunch of p ≈ .02 stuff, but the pattern remained.

I guess their second analysis (family fixed effects) also includes MZ pairs, and not just DZ twins and siblings, which makes it an unusually strong control. Overall, though, the results suggest causality or at least a very close correlate of something causal.
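For readers unfamiliar with why the sibling control matters, here is a small simulated sketch. Everything in it is assumed for illustration (the sample size, the effect of 0.3, and the strength of the shared family factor); it is not a reconstruction of the paper’s analysis. It shows a family-level confounder inflating the naive slope, and within-family demeaning (which is what family fixed effects amount to) recovering the assumed effect.

```python
import numpy as np

rng = np.random.default_rng(0)
N_FAMILIES, N_SIBS = 5000, 2
TRUE_EFFECT = 0.3  # hypothetical effect of the predictor on the outcome

# Simulated data: a shared family factor raises both predictor and outcome.
family = rng.normal(size=(N_FAMILIES, 1))
predictor = family + rng.normal(size=(N_FAMILIES, N_SIBS))
outcome = TRUE_EFFECT * predictor + family + rng.normal(size=(N_FAMILIES, N_SIBS))


def slope(x, y):
    """OLS slope of y on x (with intercept), pooling all individuals."""
    x = x.ravel() - x.mean()
    y = y.ravel() - y.mean()
    return float(x @ y / (x @ x))


def demean_within_family(values):
    """Subtract each family's mean, removing everything siblings share."""
    return values - values.mean(axis=1, keepdims=True)


naive = slope(predictor, outcome)
within = slope(demean_within_family(predictor), demean_within_family(outcome))
print(f"naive slope = {naive:.2f}, within-family slope = {within:.2f}")
```

The within-family estimate is noisier per observation because demeaning throws away the between-family variance, which matches the noisier results they report under sibling control.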

One interesting supplementary analysis concerns using all 4 tests directly:

Here we see that it is mainly vocabulary that is doing the heavy lifting.

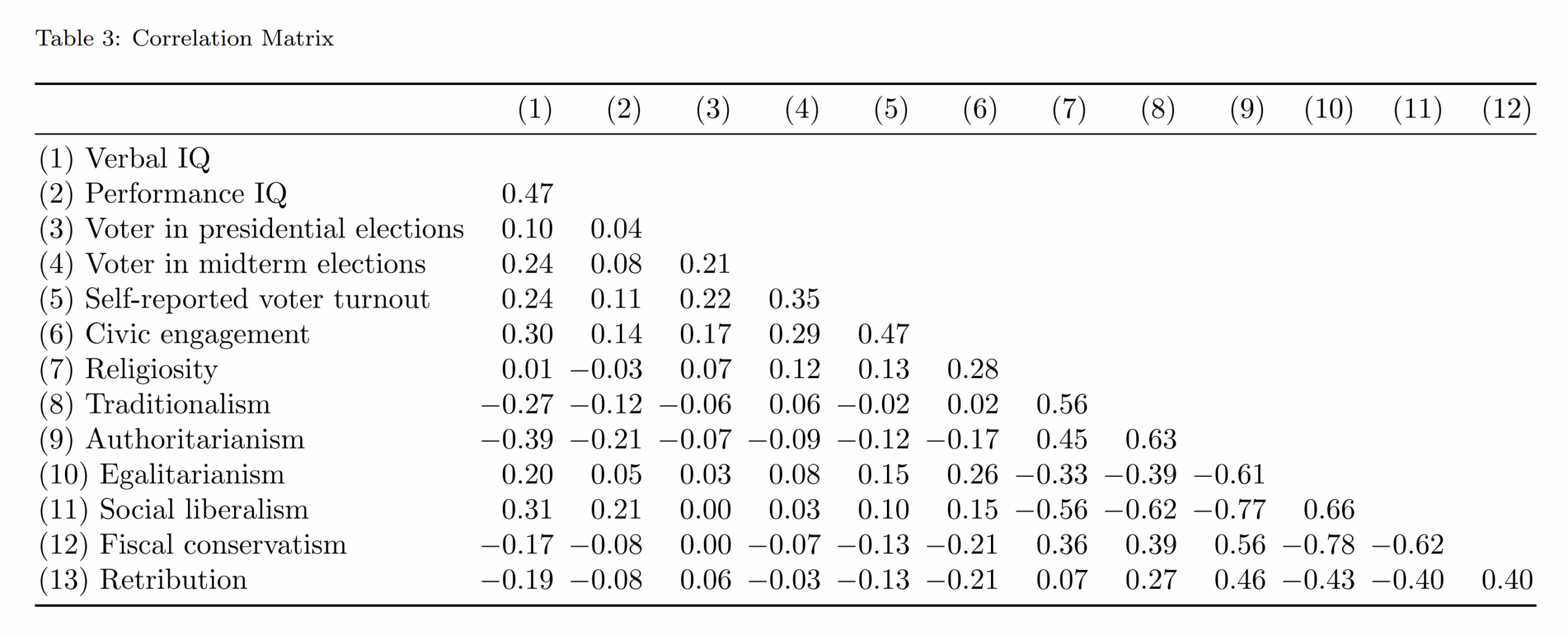

Unfortunately, the dataset is not provided, so I can’t easily do any further analyses, but they did post the correlation matrix:

Sadly, it doesn’t include the test-level correlations, so I can’t compute the g-loadings in their sample and check whether these predict the magnitude of the test validities in their plot above. For some reason they don’t report these values, but only those derived from Gignac’s (2005) analysis of the WAIS-R standardization data.

So some doubts remain, but certainly the expected verbal tilt patterns were mostly found, even using sibling controls.

On the personal side

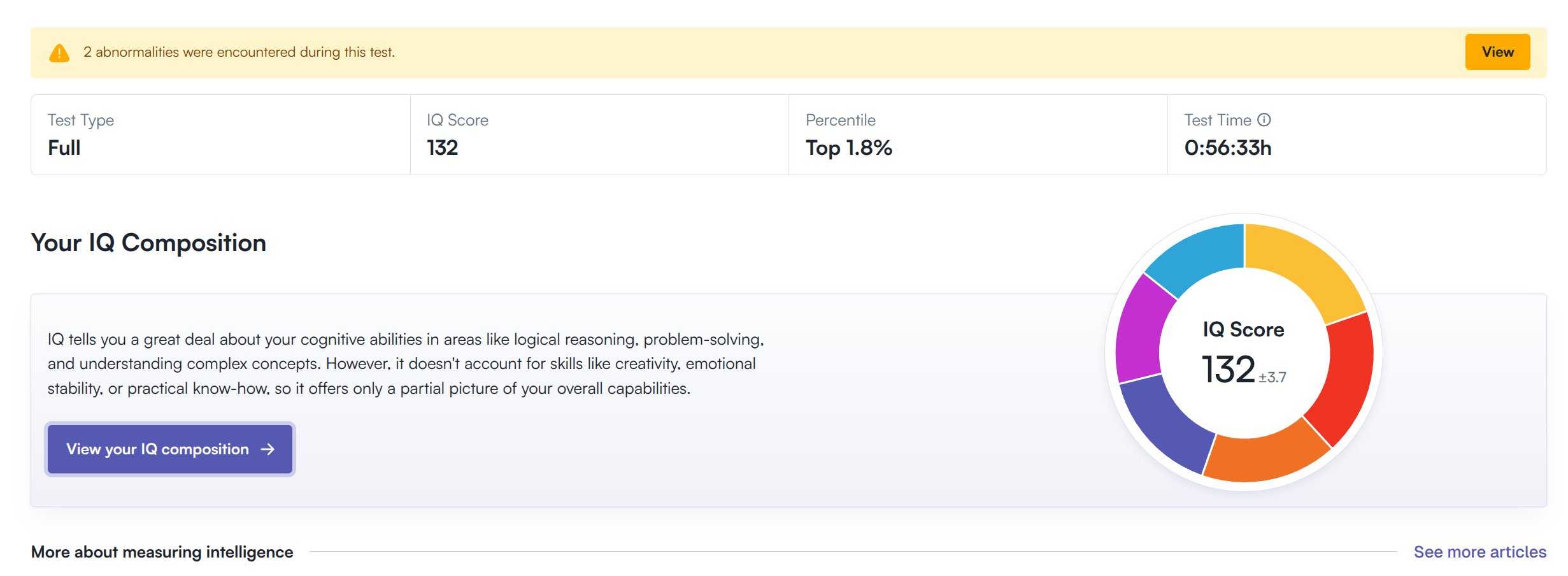

I know you are wondering. Some months ago, Russell Warne and friends launched a new online testing company, Riot IQ. They provided me with a free run of the full test battery, which I took. Here are my overall results:

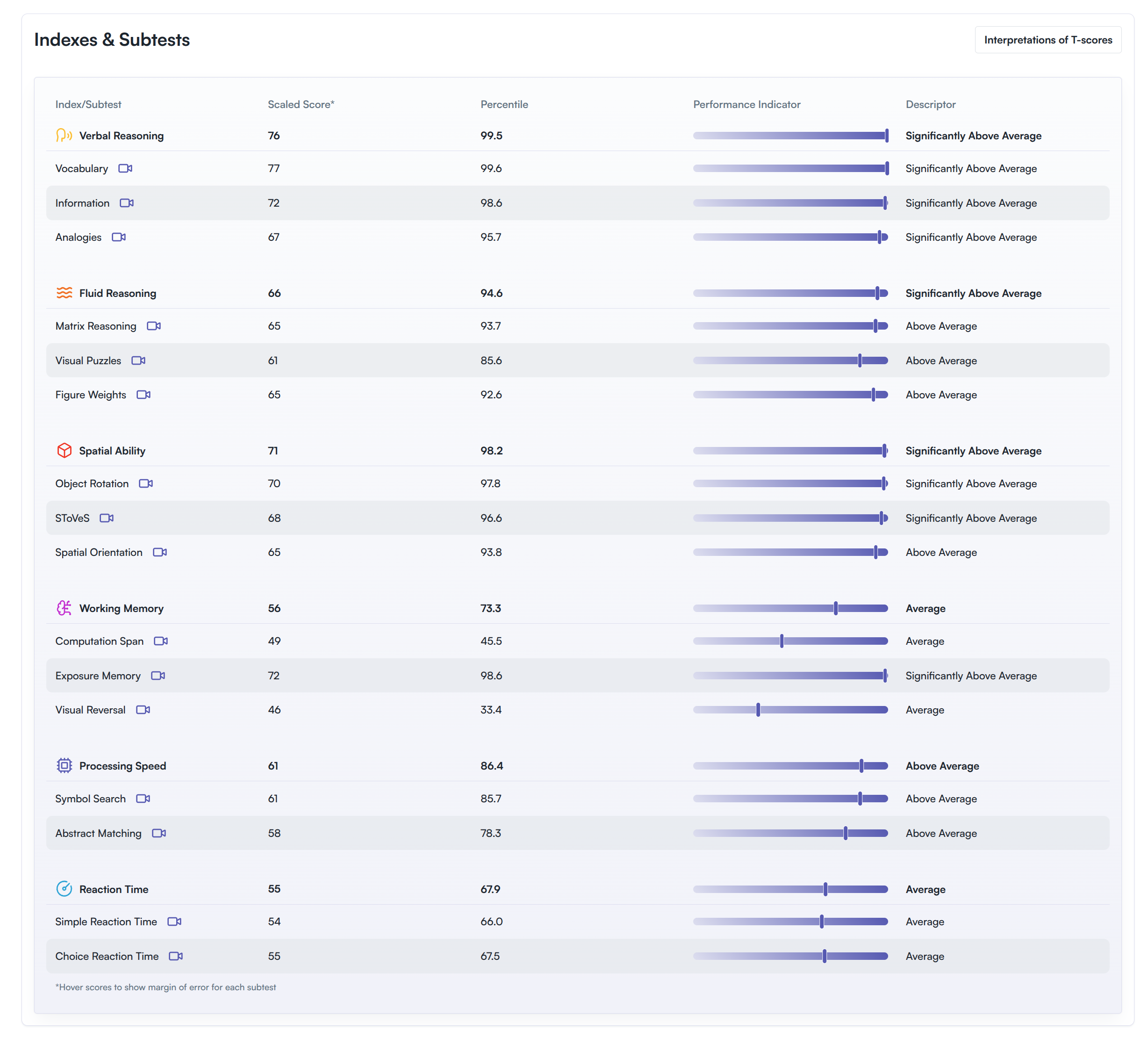

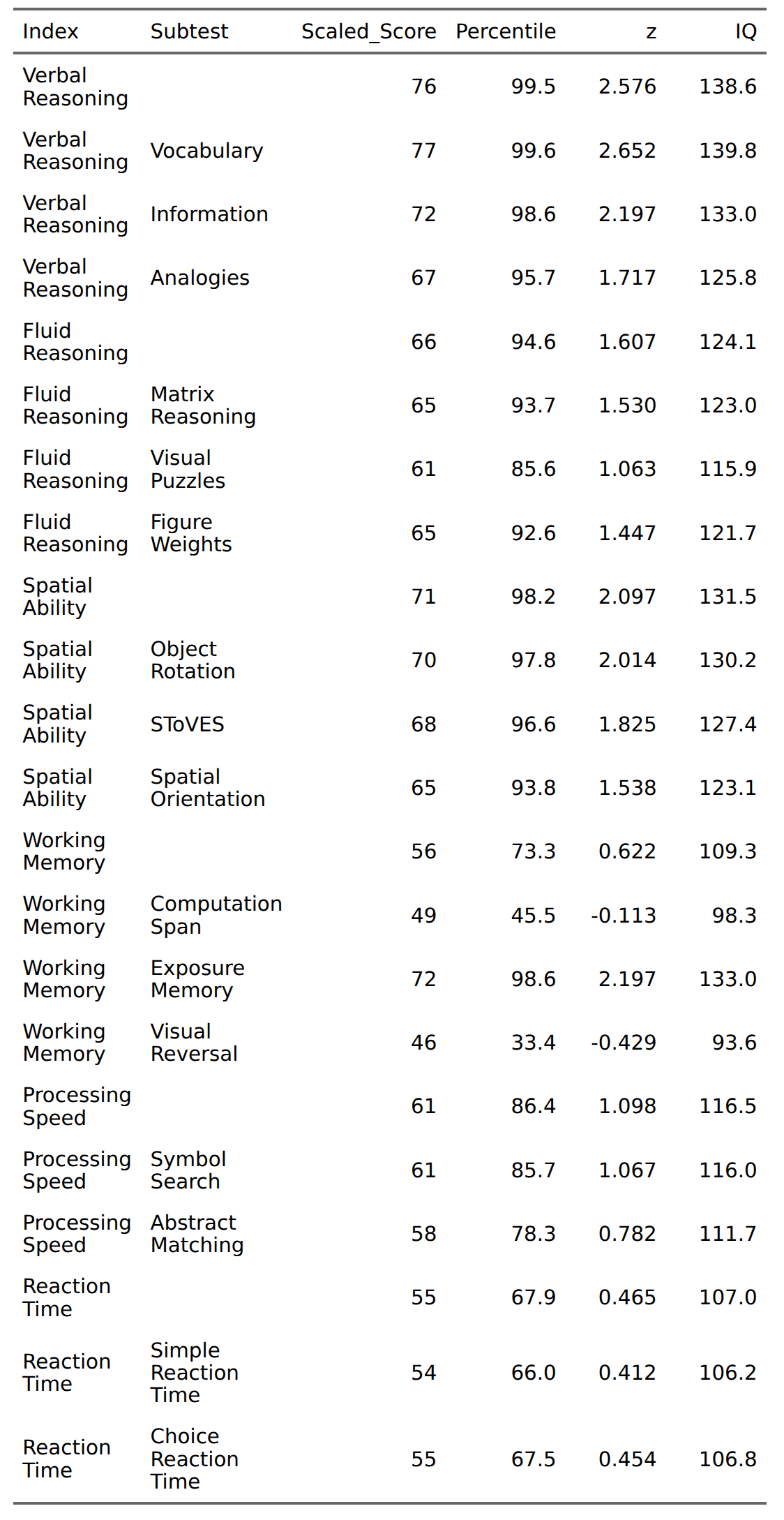

And the individual results:

T scores are an intermediate score that psychologists sometimes use to make age comparisons (mean 50, SD 10). We can, however, just convert their centiles to IQs, assuming they already did the age corrections:
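For anyone who wants to do the same with their own results, here is a minimal sketch of the conversion. It assumes scores are normally distributed and uses the conventional IQ scale (mean 100, SD 15); the function names are my own and the centiles in the loop are arbitrary examples, not my results.

```python
from statistics import NormalDist

STANDARD_NORMAL = NormalDist()  # mean 0, SD 1


def centile_to_iq(centile, mean=100.0, sd=15.0):
    """Convert a centile (strictly between 0 and 100) to an IQ-scale score."""
    if not 0.0 < centile < 100.0:
        raise ValueError("centile must be strictly between 0 and 100")
    return mean + sd * STANDARD_NORMAL.inv_cdf(centile / 100.0)


def t_score_to_iq(t_score, mean=100.0, sd=15.0):
    """Convert a T score (mean 50, SD 10) to an IQ-scale score."""
    return mean + sd * (t_score - 50.0) / 10.0


for centile in (50, 84, 98):
    print(f"centile {centile} -> IQ {centile_to_iq(centile):.0f}")
print(f"T score 60 -> IQ {t_score_to_iq(60):.0f}")
```

Note that centiles get very imprecise in the tails: the difference between the 98th and 99th centile is several IQ points, so a T score, where available, is the better starting point.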

Overall, I am not surprised by the verbal + spatial tilt. I don’t know how I managed to get literally below-average results on visual reversal, but it is true that some of their tests are very annoying and on a short timer. Maybe it was one of those. I am a bit surprised by the matrix reasoning result, which is ~10 IQ points lower than my results on the various online tests. Could be a norming issue on their side, or maybe bad luck on this one. You can get a preview of all 15 of their tests here, with examples. Note that since I am a non-native speaker, these results are a bit too low, but I don’t know what the correction would be. And somehow the most biased test, vocabulary, was my strongest result.

Anyway, I recommend trying the Riot IQ test battery for yourself. It’s paid (25 USD) and I don’t get anything for recommending it. Post results in the comments.