There are a few studies on this already. This is a rare event/person situation, so sampling error is large and problematic (cf. the discussion in the esports paper). Setting that aside, a quick literature survey lets us draw some general conclusions.

- Oransky, I. (2018). Volunteer watchdogs pushed a small country up the rankings. Science.

Based on data from the Retraction Watch database. This is a somewhat flawed approach because a lot of fraud committed in Western countries is done by foreign researchers. One needs to do the analyses by name, inferring each person's ancestry by building a machine learning model off data from e.g. behindthename.com (see my prior study on first names). As I noted on Twitter, in Denmark the most famous faker is Milena Penkowa. That doesn't sound very Danish; she is actually half Bulgarian.

If we look at the authors with the most retractions in the RW database:

| Name | Retractions | Ancestry | European | East Asian | South Asian | Other |
| --- | --- | --- | --- | --- | --- | --- |
| Yoshitaka Fujii | 183 | Japanese | | 1 | | |
| Joachim Boldt | 97 | German | 1 | | | |
| Yoshihiro Sato | 84 | Japanese | | 1 | | |
| Jun Iwamoto | 64 | Japanese | | 1 | | |
| Diederik Stapel | 58 | Dutch | 1 | | | |
| Yuhji Saitoh | 53 | Japanese | | 1 | | |
| Adrian Maxim | 48 | European | 1 | | | |
| Chen-Yuan Peter Chen | 43 | Chinese | | 1 | | |
| Fazlul Sarkar | 41 | Bengali | | | 1 | |
| Hua Zhong | 41 | Chinese | | 1 | | |
| Shigeaki Kato | 40 | Japanese | | 1 | | |
| James Hunton | 37 | European | 1 | | | |
| Hyung-In Moon | 35 | Korean | | 1 | | |
| Naoki Mori | 32 | Japanese | | 1 | | |
| Jan Hendrik Schön | 32 | German | 1 | | | |
| Soon-Gi Shin | 30 | Korean | | 1 | | |
| Tao Liu | 29 | Chinese | | 1 | | |
| Bharat Aggarwal | 28 | Indian | | | 1 | |
| Cheng-Wu Chen | 28 | Chinese | | 1 | | |
| A Salar Elahi | 27 | Persian | | | | 1 |
| Ali Nazari | 27 | Persian | | | | 1 |
| Richard L E Barnett | 26 | European | 1 | | | |
| Antonio Orlandi | 26 | Italian | 1 | | | |
| Prashant K Sharma | 26 | Indian | | | 1 | |
| Rashmi Madhuri | 24 | Indian | | | 1 | |
| Scott Reuben | 24 | European | 1 | | | |
| Shahaboddin Shamshirband | 24 | Malaysian | | | | 1 |
| Thomas M Rosica | 23 | European | 1 | | | |
| Alfredo Fusco | 22 | Italian | 1 | | | |
| M Ghoranneviss | 22 | Persian | | | | 1 |
| Anil K Jaiswal | 22 | Indian | | | 1 | |
| Gilson Khang | 22 | Korean | | 1 | | |
| Sum | | | 10 | 13 | 5 | 4 |

I coded these manually by googling each person and, where that didn't help, by relying on the name. I don't know of any ancestry- or name-based count of scientific productivity, but we can use the Nature Index that Anatoly Karlin wrote about recently and do a crude count (meaning I allocate Euro-dominant countries entirely to European, including Brazil and Israel):

| Country | FC12 | FC13 | FC14 | FC15 | FC16 | FC17 | FC18 | Mean |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| USA | 37.20 | 36.50 | 34.90 | 35.00 | 34.60 | 34.10 | 32.80 | 35.01 |
| China | 8.90 | 10.20 | 12.00 | 12.90 | 14.00 | 15.80 | 18.40 | 13.17 |
| Germany | 8.00 | 8.00 | 7.90 | 7.80 | 7.80 | 7.60 | 7.40 | 7.79 |
| UK | 6.40 | 6.40 | 6.30 | 6.50 | 6.60 | 6.30 | 6.10 | 6.37 |
| Japan | 6.80 | 6.60 | 6.20 | 5.70 | 5.50 | 5.30 | 5.00 | 5.87 |
| France | 4.60 | 4.40 | 4.30 | 4.10 | 4.00 | 3.80 | 3.60 | 4.11 |
| Canada | 3.00 | 2.90 | 2.90 | 3.00 | 2.70 | 2.70 | 2.60 | 2.83 |
| Switzerland | 2.30 | 2.30 | 2.50 | 2.30 | 2.30 | 2.30 | 2.30 | 2.33 |
| Korea | 2.30 | 2.30 | 2.30 | 2.40 | 2.30 | 2.20 | 2.20 | 2.29 |
| Spain | 2.40 | 2.30 | 2.10 | 2.00 | 2.10 | 1.90 | 1.90 | 2.10 |
| Australia | 1.70 | 1.80 | 1.90 | 2.00 | 2.00 | 1.80 | 2.00 | 1.89 |
| Italy | 2.10 | 2.10 | 2.00 | 2.00 | 1.80 | 1.80 | 1.70 | 1.93 |
| India | 1.50 | 1.70 | 1.80 | 1.60 | 1.60 | 1.70 | 1.60 | 1.64 |
| Netherlands | 1.50 | 1.50 | 1.50 | 1.50 | 1.60 | 1.60 | 1.50 | 1.53 |
| Singapore | 0.90 | 0.90 | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 | 0.97 |
| Sweden | 0.90 | 1.00 | 1.00 | 1.10 | 1.00 | 1.00 | 1.00 | 1.00 |
| Israel | 1.00 | 0.90 | 1.00 | 1.00 | 1.00 | 1.00 | 1.00 | 0.99 |
| Taiwan | 1.20 | 1.10 | 0.90 | 0.80 | 0.80 | 0.70 | 0.60 | 0.87 |
| Russia | 0.60 | 0.70 | 0.70 | 0.70 | 0.70 | 0.70 | 0.70 | 0.69 |
| Belgium | 0.70 | 0.60 | 0.70 | 0.70 | 0.80 | 0.70 | 0.70 | 0.70 |
| Austria | 0.50 | 0.50 | 0.60 | 0.50 | 0.60 | 0.60 | 0.60 | 0.56 |
| Denmark | 0.60 | 0.60 | 0.60 | 0.60 | 0.70 | 0.60 | 0.70 | 0.63 |
| Brazil | 0.40 | 0.50 | 0.50 | 0.40 | 0.40 | 0.40 | 0.50 | 0.44 |
| Poland | 0.40 | 0.40 | 0.40 | 0.40 | 0.40 | 0.40 | 0.40 | 0.40 |
| Czechia | 0.20 | 0.20 | 0.20 | 0.30 | 0.30 | 0.30 | 0.30 | 0.26 |
| Sum | 96.10 | 96.40 | 96.20 | 96.30 | 96.60 | 96.30 | 96.60 | 96.36 |

| European | East Asian | South Asian |
| --- | --- | --- |
| 71.54 | 23.17 | 1.64 |

Europeans produced ~72% of ‘good science’ in 2012-2018, East Asians ~23% and South Asians ~2%, with a remainder category of ~4%. On the top list of 32 names, the same groups make up 10, 13 and 5 entries, i.e. ~31%, ~41% and ~16%. So Europeans account for a much larger share of the science than of the top science cheaters, and vice versa for the other groups.
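The crude count is easy to reproduce from the Mean column. Below is a minimal sketch, not the original spreadsheet: the country-to-group assignment is my reconstruction from the reported totals (Singapore counted as East Asian, India as the only South Asian country, everything else as European), and all names in the code are made up for illustration. Since it sums rounded means, the European total lands a hair off the 71.54 in the table.

```python
# Mean Nature Index share (%), 2012-2018, copied from the "Mean" column above.
MEAN_SHARE = {
    "USA": 35.01, "China": 13.17, "Germany": 7.79, "UK": 6.37, "Japan": 5.87,
    "France": 4.11, "Canada": 2.83, "Switzerland": 2.33, "Korea": 2.29,
    "Spain": 2.10, "Australia": 1.89, "Italy": 1.93, "India": 1.64,
    "Netherlands": 1.53, "Singapore": 0.97, "Sweden": 1.00, "Israel": 0.99,
    "Taiwan": 0.87, "Russia": 0.69, "Belgium": 0.70, "Austria": 0.56,
    "Denmark": 0.63, "Brazil": 0.44, "Poland": 0.40, "Czechia": 0.26,
}

# ASSUMPTION: grouping inferred from the group totals reported in the post.
EAST_ASIAN = {"China", "Japan", "Korea", "Singapore", "Taiwan"}
SOUTH_ASIAN = {"India"}

# Counts from the Sum row of the top-retractions table (32 names in total).
TOP_LIST = {"European": 10, "East Asian": 13, "South Asian": 5, "Other": 4}


def group_of(country):
    """Map a country to one of the three crude groups."""
    if country in EAST_ASIAN:
        return "East Asian"
    if country in SOUTH_ASIAN:
        return "South Asian"
    return "European"


def output_share_by_group(mean_share):
    """Sum the mean output share (%) of all countries in each group."""
    totals = {"European": 0.0, "East Asian": 0.0, "South Asian": 0.0}
    for country, share in mean_share.items():
        totals[group_of(country)] += share
    return totals


def representation(top_list, output_share):
    """Share of the top list divided by share of output, per group."""
    n = sum(top_list.values())
    return {g: (100 * top_list[g] / n) / output_share[g] for g in output_share}


output_share = output_share_by_group(MEAN_SHARE)
ratios = representation(TOP_LIST, output_share)
for group in output_share:
    print(f"{group}: {output_share[group]:.2f}% of output, "
          f"top-list/output ratio {ratios[group]:.2f}")
```

A ratio below 1 means the group is rarer on the top list than in the output data, above 1 the opposite. With only 32 names, these ratios are rough at best.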

- Ataie-Ashtiani, B. (2018). World map of scientific misconduct. Science and Engineering Ethics, 24(5), 1653-1656.

A comparative world map of scientific misconduct reveals that countries with the most rapid growth in scientific publications also have the highest retraction rate. To avoid polluting the scientific record further, these nations must urgently commit to enforcing research integrity among their academic communities.

The paper gives us a nice table, and I did the same kind of crude calculation (grouping Latin Americans with Europeans, on the assumption that the people who do science there are mostly of European ancestry):

| Country | Publications | Misconducts | Ratio | Ratio relative to European |
| --- | --- | --- | --- | --- |
| China | 2741274 | 4353 | 0.001588 | 67.66 |
| Malaysia | 157198 | 50 | 0.000318 | 13.55 |
| Mexico | 121193 | 31 | 0.000256 | 10.90 |
| Taiwan | 252497 | 46 | 0.000182 | 7.76 |
| Pakistan | 71350 | 10 | 0.000140 | 5.97 |
| Iran | 271403 | 38 | 0.000140 | 5.96 |
| Saudi Arabia | 97886 | 8 | 0.000082 | 3.48 |
| Hong Kong | 100036 | 8 | 0.000080 | 3.41 |
| South Korea | 465211 | 32 | 0.000069 | 2.93 |
| Egypt | 92328 | 6 | 0.000065 | 2.77 |
| India | 747844 | 39 | 0.000052 | 2.22 |
| Singapore | 117089 | 6 | 0.000051 | 2.18 |
| Thailand | 78124 | 4 | 0.000051 | 2.18 |
| Australia | 529779 | 19 | 0.000036 | 1.53 |
| Netherlands | 343352 | 12 | 0.000035 | 1.49 |
| Romania | 87280 | 3 | 0.000034 | 1.46 |
| Japan | 787157 | 27 | 0.000034 | 1.46 |
| Canada | 606562 | 20 | 0.000033 | 1.40 |
| Italy | 624340 | 18 | 0.000029 | 1.23 |
| Greece | 114300 | 3 | 0.000026 | 1.12 |
| United Kingdom | 1145434 | 30 | 0.000026 | 1.12 |
| Ireland | 79950 | 2 | 0.000025 | 1.07 |
| Germany | 1010967 | 25 | 0.000025 | 1.05 |
| Czech Republic | 130262 | 3 | 0.000023 | 0.98 |
| United States | 3876791 | 88 | 0.000023 | 0.97 |
| Portugal | 134433 | 3 | 0.000022 | 0.95 |
| Austria | 142689 | 3 | 0.000021 | 0.90 |
| Poland | 238095 | 5 | 0.000021 | 0.89 |
| Belgium | 192437 | 4 | 0.000021 | 0.89 |
| Turkey | 246018 | 5 | 0.000020 | 0.87 |
| Sweden | 227239 | 4 | 0.000018 | 0.75 |
| France | 712371 | 10 | 0.000014 | 0.60 |
| Argentina | 77402 | 1 | 0.000013 | 0.55 |
| Russian Federation | 340791 | 4 | 0.000012 | 0.50 |
| New Zealand | 87919 | 1 | 0.000011 | 0.48 |
| Spain | 526613 | 5 | 0.000009 | 0.40 |
| South Africa | 110908 | 1 | 0.000009 | 0.38 |
| Norway | 119574 | 1 | 0.000008 | 0.36 |
| Brazil | 394107 | 3 | 0.000008 | 0.32 |
| Switzerland | 258541 | 0 | 0.000000 | 0.00 |
| Israel | 119452 | 0 | 0.000000 | 0.00 |
| Denmark | 147828 | 0 | 0.000000 | 0.00 |
| Finland | 115287 | 0 | 0.000000 | 0.00 |
| Hungary | 63662 | 0 | 0.000000 | 0.00 |
| Ukraine | 59555 | 0 | 0.000000 | 0.00 |
| Chile | 62837 | 0 | 0.000000 | 0.00 |

| Region | Publications | Misconducts | Ratio | Ratio relative to European |
| --- | --- | --- | --- | --- |
| European | 12739113 | 299 | 0.000023 | 1.00 |
| East Asian | 4463264 | 4472 | 0.001002 | 42.69 |
| Southeast Asian | 235322 | 54 | 0.000229 | 9.78 |
| South Asian | 747844 | 39 | 0.000052 | 2.22 |
| MENAP | 778985 | 67 | 0.000086 | 3.66 |

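A staggering ratio for East Asians, though it is driven almost entirely by China, which accounts for 4,353 of the 4,472 East Asian cases; Japan (1.46) and South Korea (2.93) sit much closer to the European rate.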
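For anyone who wants to check the regional arithmetic, here is a minimal sketch. It takes the regional totals from the table as given (it does not redo the country-to-region grouping), and the function names are mine. As an extra that is not in the table, it also computes the East Asian figure with China's row subtracted, to show how much of the ratio comes from that one country.

```python
# (publications, misconduct cases) per region, copied from the regional table.
REGIONS = {
    "European": (12739113, 299),
    "East Asian": (4463264, 4472),
    "Southeast Asian": (235322, 54),
    "South Asian": (747844, 39),
    "MENAP": (778985, 67),
}

# China's row from the country table, used only for the ex-China check.
CHINA = (2741274, 4353)


def rate(publications, cases):
    """Misconduct cases per publication."""
    return cases / publications


def relative_rates(regions, baseline="European"):
    """Each region's rate divided by the baseline region's rate."""
    base = rate(*regions[baseline])
    return {name: rate(*counts) / base for name, counts in regions.items()}


rel = relative_rates(REGIONS)
for name, value in rel.items():
    print(f"{name}: {value:.2f}x the European rate")

# East Asia without China: subtract China's publications and cases.
ea_pubs, ea_cases = REGIONS["East Asian"]
ea_ex_china = (
    rate(ea_pubs - CHINA[0], ea_cases - CHINA[1]) / rate(*REGIONS["European"])
)
print(f"East Asian excluding China: {ea_ex_china:.2f}x the European rate")
```

The regional ratios come out the same as in the table. The ex-China line is only a sanity check on how concentrated the East Asian number is.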

- Fanelli, D., Costas, R., Fang, F. C., Casadevall, A., & Bik, E. M. (2019). Testing hypotheses on risk factors for scientific misconduct via matched-control analysis of papers containing problematic image duplications. Science and Engineering Ethics, 25(3), 771-789.

It is commonly hypothesized that scientists are more likely to engage in data falsification and fabrication when they are subject to pressures to publish, when they are not restrained by forms of social control, when they work in countries lacking policies to tackle scientific misconduct, and when they are male. Evidence to test these hypotheses, however, is inconclusive due to the difficulties of obtaining unbiased data. Here we report a pre-registered test of these four hypotheses, conducted on papers that were identified in a previous study as containing problematic image duplications through a systematic screening of the journal PLoS ONE. Image duplications were classified into three categories based on their complexity, with category 1 being most likely to reflect unintentional error and category 3 being most likely to reflect intentional fabrication. We tested multiple parameters connected to the hypotheses above with a matched-control paradigm, by collecting two controls for each paper containing duplications. Category 1 duplications were mostly not associated with any of the parameters tested, as was predicted based on the assumption that these duplications were mostly not due to misconduct. Categories 2 and 3, however, exhibited numerous statistically significant associations. Results of univariable and multivariable analyses support the hypotheses that academic culture, peer control, cash-based publication incentives and national misconduct policies might affect scientific integrity. No clear support was found for the “pressures to publish” hypothesis. Female authors were found to be equally likely to publish duplicated images compared to males. Country-level parameters generally exhibited stronger effects than individual-level parameters, because developing countries were significantly more likely to produce problematic image duplications. 
This suggests that promoting good research practices in all countries should be a priority for the international research integrity agenda.

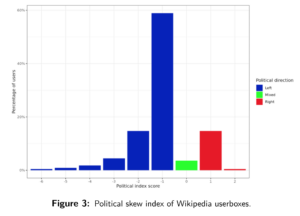

Which produced this (low quality) figure:

The data are pretty noisy, but among the countries with p < .05, Germany and Japan fall below the USA, while Argentina, Belgium, India, China and others fall above it. I don’t know what is up with Belgium here, but otherwise the results aren’t so surprising.

All in all, the Hajnal pattern applies. Everybody cheats, but people outside the Hajnal line cheat a lot more. There are more data out there, a lot of it private. A friend of mine works with applications from foreigners who want to study in the UK (scholarships). Applicants send in essays and the like, along with English test scores (TOEFL etc.). The agencies screen the essays for plagiarism, so one gets a per capita rate of plagiarism. Applicants who pass the screening are given an interview in English. Often, people with perfect essays can’t speak English very well, generally because they hired someone to write their essays for them. Plagiarism testing does not detect this, but it shows up as a massive discrepancy between written and spoken English ability. One can also look up various cheating scandals, as summarized by Those Who Can See.

Edited to add on 2021-07-27

New study confirms what we know:

- Carlisle, J. B. (2021). False individual patient data and zombie randomised controlled trials submitted to Anaesthesia. Anaesthesia, 76(4), 472-479.

Concerned that studies contain false data, I analysed the baseline summary data of randomised controlled trials when they were submitted to Anaesthesia from February 2017 to March 2020. I categorised trials with false data as ‘zombie’ if I thought that the trial was fatally flawed. I analysed 526 submitted trials: 73 (14%) had false data and 43 (8%) I categorised zombie. Individual patient data increased detection of false data and categorisation of trials as zombie compared with trials without individual patient data: 67/153 (44%) false vs. 6/373 (2%) false; and 40/153 (26%) zombie vs. 3/373 (1%) zombie, respectively. The analysis of individual patient data was independently associated with false data (odds ratio (95% credible interval) 47 (17–144); p = 1.3 × 10−12) and zombie trials (odds ratio (95% credible interval) 79 (19–384); p = 5.6 × 10−9). Authors from five countries submitted the majority of trials: China 96 (18%); South Korea 87 (17%); India 44 (8%); Japan 35 (7%); and Egypt 32 (6%). I identified trials with false data and in turn categorised trials zombie for: 27/56 (48%) and 20/56 (36%) Chinese trials; 7/22 (32%) and 1/22 (5%) South Korean trials; 8/13 (62%) and 6/13 (46%) Indian trials; 2/11 (18%) and 2/11 (18%) Japanese trials; and 9/10 (90%) and 7/10 (70%) Egyptian trials, respectively. The review of individual patient data of submitted randomised controlled trials revealed false data in 44%. I think journals should assume that all submitted papers are potentially flawed and editors should review individual patient data before publishing randomised controlled trials.
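The per-country percentages in the abstract can be recomputed from the counts it quotes. Here is a minimal sketch that does so and, since the denominators are tiny, adds an approximate 95% Wilson score interval for the false-data rate. The interval is my addition, not something from the paper, and the counts and denominators are used exactly as quoted in the abstract without further interpretation.

```python
from math import sqrt

# (trials with false data, zombie trials, denominator), as quoted in the abstract.
TRIALS = {
    "China": (27, 20, 56),
    "South Korea": (7, 1, 22),
    "India": (8, 6, 13),
    "Japan": (2, 2, 11),
    "Egypt": (9, 7, 10),
}


def wilson_interval(k, n, z=1.96):
    """Approximate 95% Wilson score interval for the proportion k/n."""
    p = k / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half


def summarise(trials):
    """Rows of (country, % false, % zombie, CI low %, CI high %), highest % false first."""
    rows = []
    for country, (false, zombie, n) in trials.items():
        lo, hi = wilson_interval(false, n)
        rows.append((country, 100 * false / n, 100 * zombie / n, 100 * lo, 100 * hi))
    return sorted(rows, key=lambda row: row[1], reverse=True)


rows = summarise(TRIALS)
for country, pct_false, pct_zombie, lo, hi in rows:
    print(f"{country}: {pct_false:.0f}% false (95% CI {lo:.0f}-{hi:.0f}%), "
          f"{pct_zombie:.0f}% zombie")
```

The intervals are wide for the countries with 10 to 13 trials, so the ranking between them should not be over-read.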

Table of results in detail:

Difficult data presentation, let’s re-do it:

Here, of course, we are guessing that the guilty people in the Western countries are likely of foreign origin. The studies aren’t named, so I couldn’t check (there is an appendix that lists all studies, but without names or links). The Swiss are obviously not known for being dishonest.